Starting a new project on AWS feels exciting right up until you open the billing console and realize how fast small experiments can turn into real spend. A test database stays up overnight. An EC2 instance keeps running after a demo. A founder tries Bedrock, Lambda, and S3 in the same week, then wonders whether the “free” part is already gone. That’s the moment many users start searching for free AWS credits.

The good news is that there are legitimate ways to get them. The bad news is that many guides mix old programs, vague eligibility advice, and claims that don’t hold up once you read the actual terms. That creates the wrong expectations. Teams assume credits will cover everything, or that getting them is the hard part, when the main challenge is in fact stretching them long enough to learn something useful.

This guide focuses on what works. It covers the official AWS paths for new users, startup programs, nonprofit and research routes, migration funding, and selective accelerator opportunities. Just as important, it calls out the trade-offs. Some options are broad but small. Some are generous but highly selective. Some are useful only if your organization already fits a specific profile.

If you're trying to get free AWS credits in 2026, treat them as temporary runway, not as a cost strategy by themselves. The teams that get the most value usually do two things well. They choose the right credit program for their stage, and they put controls in place before the credits start burning. That means budgets, alerts, tagging, and some form of automated shutdown for idle compute.

1. AWS Free Tier

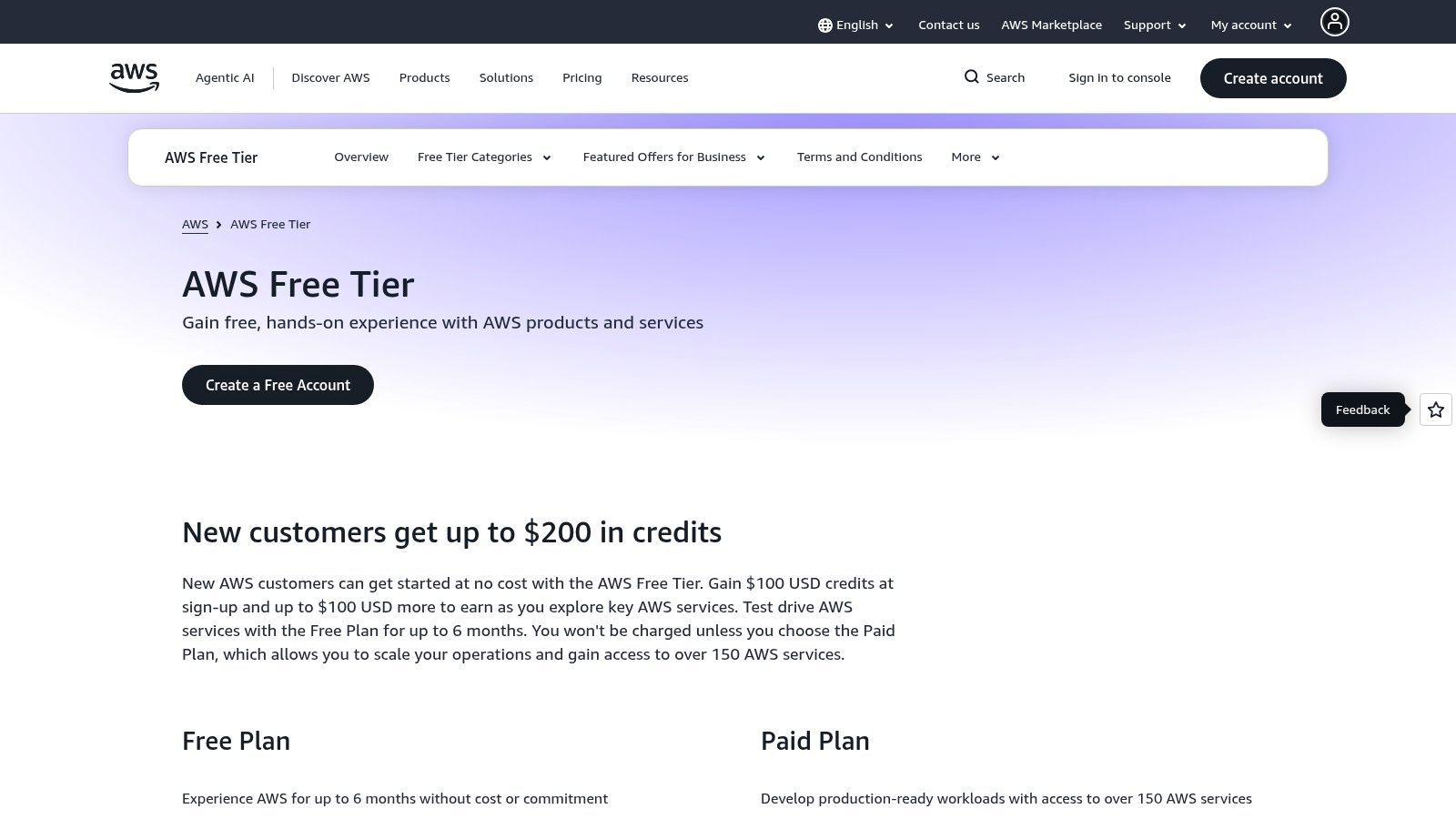

A new AWS account can feel cheap for the first hour and expensive by the end of the week. One test instance runs overnight, a few S3 objects pile up, and a “quick” Bedrock trial turns into real usage. AWS now addresses that with a credit-based Free Tier. New customers can receive up to $200 USD in Free Tier credits under the current program. AWS states that the structure includes $100 at account creation, plus another $100 available through onboarding tasks such as setting budget alerts and enabling MFA.

That model is more practical than the old advice many guides still recycle. Instead of forcing new users to track dozens of separate service quotas from day one, AWS gives you a credit pool and a defined evaluation period. For a first proof of concept, that makes testing much easier.

What makes it useful

The main advantage is flexibility. Credits can cover a broader mix of early experiments, which is better than building around whatever happens to be free in a narrow service-specific allowance. If the goal is to compare Lambda against ECS, test a small database, or run a short internal demo, the program gives enough room to learn before you commit cash.

The catch is that flexibility also makes waste easier.

Teams burn these credits fastest on idle compute, oversized instances, and storage they forget to clean up. The right move is to treat the Free Tier like limited runway. Set budgets on day one. Tag everything. Add automatic stop schedules for non-production resources. If you already know the workload may continue after the credits expire, review how AWS Savings Plans affect your post-credit cost structure before you choose an architecture.

Practical rule: If a resource does not need to be on at night or on weekends, schedule it off before the first test starts.
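The rule above can be sketched in a few lines of Python. This is a minimal illustration, not an AWS API: the working hours, the weekday set, and the function names are all assumptions chosen for the example. A real scheduler would call a check like this from whatever automation stops and starts your instances.

```python
from datetime import datetime

# Assumed working window for non-production resources (hypothetical values).
WORK_START_HOUR = 8           # 08:00 local time
WORK_END_HOUR = 18            # 18:00 local time
WORK_DAYS = {0, 1, 2, 3, 4}   # Monday=0 ... Friday=4


def should_be_running(now: datetime) -> bool:
    """Return True if a non-production resource should be on at `now`."""
    in_work_day = now.weekday() in WORK_DAYS
    in_work_hours = WORK_START_HOUR <= now.hour < WORK_END_HOUR
    return in_work_day and in_work_hours


def scheduled_hours_per_week() -> int:
    """Hours per week the resource runs under the assumed schedule."""
    return len(WORK_DAYS) * (WORK_END_HOUR - WORK_START_HOUR)


def runtime_reduction() -> float:
    """Fraction of always-on runtime (7 * 24 hours) avoided by the schedule."""
    always_on_hours = 7 * 24
    return 1 - scheduled_hours_per_week() / always_on_hours
```

Under these assumed hours, a resource runs 50 of 168 hours each week, so roughly 70% of always-on runtime goes away. Stopped instances can still incur storage charges, so the drop in the bill will usually be smaller than the drop in runtime.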

Best fit and real limits

The AWS Free Tier works best for first-time AWS users, small teams validating an idea, and companies running short proofs of concept. It is not a production funding source. Once the credits are gone, standard charges start unless an always-free allowance still covers part of the usage.

Some always-free offers continue after the initial credit period. Those can support lightweight internal tools or low-traffic experiments, but they do not fix weak cost hygiene. If you want credits to last, pair them with automation. CLOUD TOGGLE is useful here because it helps identify idle resources and shut down avoidable spend before the Free Tier disappears. That is the difference between getting a few weeks of random testing and getting a full learning cycle out of the same credit pool.

2. AWS Activate Founders

A founder has a prototype that works, a few customer calls lined up, and no accelerator badge to help with cloud funding. AWS Activate Founders exists for that stage. It gives early teams a formal credit path before they have VC backing or a provider relationship.

For an early product company, the value is not just the credit amount. Founders gives you a chance to test a real build under light business pressure instead of treating AWS as a short-lived sandbox. That changes how the credits should be used. Spend them on decisions that reduce uncertainty, such as whether your app can run on smaller instances, whether managed services save operator time, or whether your architecture will become expensive the moment the credits expire.

Where it helps most

Founders fits teams that are past casual testing and into product development. Good use cases include a working MVP, internal demos for prospects, staging environments, and limited early customer traffic. It can also cover support benefits that matter when a small team needs faster answers and cannot afford long debugging cycles.

A common mistake is for founders to treat these credits as temporary permission to run everything all the time. That burns through startup support fast. Persistent EC2 for dev work, large databases kept running overnight, and unreviewed storage growth will consume credits without teaching you much about the product.

Use Founders as controlled runway. Set spend alerts before provisioning. Tag by environment and owner. Schedule non-production resources off outside working hours. If the workload is likely to stay on AWS after the credits are gone, review how AWS Savings Plans affect post-credit infrastructure costs before you lock in compute patterns.

What to expect in the application process

Approval is usually measured in days, not weeks, but it is still not immediate. Apply before the build reaches a spending spike. Waiting until the account is already under pressure turns a useful credit program into a late reimbursement strategy, which it is not.

Use the official AWS Activate Founders page for current eligibility and submission steps.

- Good fit: Self-funded startups building their own software product

- Weak fit: Service businesses, agencies, or teams without a clear product and company presence

- Best use: MVP development, early validation, and short feedback loops tied to specific technical questions

Founders works best when the team has a short list of things to prove and cost controls in place. Pairing the credits with automation from CLOUD TOGGLE helps stretch that runway by catching idle resources and waste before they drain the balance.

3. AWS Activate Portfolio

A startup gets accepted into a well-known accelerator, receives a large AWS credit allocation, and assumes the runway problem is solved. Three months later, a mix of oversized test environments, idle databases, and unfocused AI experiments has burned through a meaningful share of the balance. That is the central risk with Portfolio. The credit amount is large enough to help, and large enough to hide bad cost habits.

AWS Activate Portfolio is the strongest mainstream credit path for startups with backing from an approved Activate Provider. In practice, that usually means an accelerator, venture firm, incubator, or startup program already recognized by AWS. The headline benefit is access to a much larger credit pool than Founders, but the qualification bar is higher and the margin for waste gets smaller.

Why Portfolio changes the operating model

Portfolio fits teams that have moved past basic MVP testing and need cloud credits tied to a sharper execution plan. AWS expects more than a company idea and a landing page. Provider backing, clearer product direction, and cleaner company documentation matter because this program is designed for startups with external validation.

That distinction matters on the cost side too.

A small credit grant forces discipline because the limit is obvious. A larger grant can delay hard decisions about architecture, environments, and ownership. Teams keep extra stacks running, overprovision managed services, and postpone cleanup because the bill is not hitting cash yet. Then the credits expire, and the true monthly burn appears all at once.

How to use Portfolio without wasting it

Treat Portfolio credits as planned capital, not free capacity. Start by mapping the credits to specific milestones: ship a production beta, validate a data pipeline, run a bounded AI test, or support a customer launch. If a workload does not help prove a near-term business or technical outcome, it should compete hard for spend.

Set controls before usage ramps:

- Assign owners for every environment, database, and high-cost service

- Tag by product, team, and stage so spend can be reviewed quickly

- Schedule non-production resources off outside working hours

- Review GPU and managed database sizing weekly during active experimentation

- Use CLOUD TOGGLE or equivalent automation to catch idle resources and prevent credits from disappearing into avoidable waste
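A tagging rule only helps if something checks it. The sketch below shows one way to flag resources that are missing required tags. The tag keys, the inventory shape, and the function names are assumptions for illustration. In practice the inventory would come from your own export or tooling rather than a hard-coded dictionary.

```python
# Assumed tag keys, based on the "product, team, stage" and owner rules above.
REQUIRED_TAGS = ("product", "team", "stage", "owner")


def missing_tags(resource_tags: dict[str, str]) -> list[str]:
    """Return required tag keys that are absent or blank on one resource."""
    return [
        key for key in REQUIRED_TAGS
        if not resource_tags.get(key, "").strip()
    ]


def untagged_resources(inventory: dict[str, dict[str, str]]) -> dict[str, list[str]]:
    """Map each non-compliant resource ID to the tag keys it is missing."""
    report = {}
    for resource_id, tags in inventory.items():
        gaps = missing_tags(tags)
        if gaps:
            report[resource_id] = gaps
    return report


if __name__ == "__main__":
    # Hypothetical inventory with one compliant and one non-compliant resource.
    inventory = {
        "i-aaa": {"product": "api", "team": "core", "stage": "dev", "owner": "sam"},
        "db-bbb": {"product": "api", "stage": "staging", "owner": " "},
    }
    print(untagged_resources(inventory))
```

A report like this is most useful when it runs on a schedule and goes to the people who can fix it, since spend without an owner tag is the hardest spend to review.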

With those controls in place, Portfolio becomes more than a bigger version of Founders. It supports broader execution, but only if the team already knows how to manage shared infrastructure with some discipline.

Practical fit and limitations

Portfolio is a strong fit for venture-backed or accelerator-backed startups building a product with real usage ahead. It is weaker for agencies, consultancies, and teams without a recognized provider relationship. Eligibility also depends on meeting AWS's startup eligibility criteria, so weak documentation or a loose company profile can still stall the process.

The common failure mode is simple. Teams optimize for getting the credits, then fail to manage what happens after approval.

- Best fit: Startups with approved provider backing, a product roadmap, and identifiable infrastructure owners

- Primary constraint: Access depends on the Activate Provider relationship and application quality

- Best use: Scaling beyond MVP, supporting customer growth, and funding targeted experiments with clear limits

- Common mistake: Treating the balance like a general buffer instead of a timed resource with a specific job

Use Portfolio when the company is ready to convert credits into measurable progress. If the team cannot explain where the credits will go, who owns that spend, and what gets turned off when a test ends, the program will cover inefficiency instead of accelerating growth.

4. AWS Nonprofit Credit Program via TechSoup

A nonprofit usually reaches this program at a very practical moment. The team has a donation platform, case management system, public website, or reporting workload running on AWS, and the monthly bill is starting to compete with mission spend. For that situation, TechSoup is one of the more realistic paths to AWS credits because it is built around organizational verification rather than startup growth signals.

The fit is different from Activate. Nonprofits are usually trying to support stable operations on a fixed budget, not fund rapid product expansion. That changes how credits should be used. The best result comes from applying them to known workloads with clear owners, predictable usage, and a plan for what stays on after the credit period ends.

Where it creates real value

This program works best for organizations that already know what AWS is doing for them. A nonprofit with a production website, a donor-facing application, scheduled data processing, or internal tools for program delivery can turn credits into direct budget relief. A nonprofit that is still experimenting without workload discipline can burn through the balance without improving long-term sustainability.

That is the main trade-off. The credit can reduce pressure fast, but it does not fix weak cloud hygiene.

I recommend treating nonprofit credits as a budget extension with controls attached. Set service-level budgets before the credits are applied. Tag environments by program, owner, and lifecycle. Use CLOUD TOGGLE or equivalent cost automation to catch idle compute, unattached storage, and oversized instances early. If the goal is to make credits last longer, the fastest win is usually waste removal, not architecture redesign.

Limits teams should expect

TechSoup verification and AWS program terms can narrow who qualifies and how the credits can be used. Geography, nonprofit status, account setup, and current program rules all matter. Approved credits also may not offset every service the organization wants to run, especially if the environment has grown without much cost review.

SMBs without nonprofit status should not assume this route applies to them. It usually does not.

A practical screening question helps here. If the organization cannot explain which workloads the credits will cover, what usage should be reduced first, and who is responsible for shutting down temporary resources, the credits will act as a short billing pause rather than a cost strategy.

For eligible nonprofits with ongoing AWS operations, this is one of the few credit paths that can support routine infrastructure needs. The value is strongest when finance, operations, and the cloud owner treat the credit as temporary support for a controlled environment, not as permission to postpone cleanup.

5. AWS IMAGINE Grant

A nonprofit may already have donor funding, a capable team, and a clear mission outcome, but still hit a wall on cloud budget. That is the kind of case where the AWS IMAGINE Grant deserves attention. It is a selective program for nonprofits with a defined AWS project, not a general credit pool for routine bills.

The difference matters. This program rewards execution readiness. AWS is evaluating whether the project has a credible plan, internal ownership, and a real reason to run on cloud infrastructure.

Teams should take this path seriously if they can answer three basic questions up front. What workload will run on AWS? Who owns delivery after the grant is awarded? How will the organization measure mission impact once the credits are gone?

Where it fits

The strongest candidates usually have a specific initiative such as a beneficiary platform, data modernization effort, case management improvement, or digital service rollout. Broad statements about innovation are weak. A proposal with named systems, timeline assumptions, and operating owners is stronger.

This also changes how to think about the credit value. The grant can include more than billing relief. For the right project, the bigger advantage is structured AWS involvement tied to a concrete nonprofit outcome.

Trade-offs teams should plan for

The application takes work, and that work is part of the cost. A nonprofit will need time from operations, technical leadership, and program stakeholders to define scope well enough to be credible.

That effort is justified when the project is already real.

It is usually a poor fit for organizations that only want to reduce their next few invoices. If there is no clear workload plan, no one accountable for cloud operations, or no post-grant operating model, the credits can disappear into avoidable waste. In practice, that means idle instances, overprovisioned databases, forgotten snapshots, and test environments that stay online after the pilot ends.

A better approach is to treat the grant like temporary project capital. Build a spend plan before approval. Set tagging rules by project and owner. Put budget alerts in place on day one. Use CLOUD TOGGLE or a similar cost automation tool to catch underused resources early so the grant funds actual delivery instead of background waste.

- Best candidate: A nonprofit with a defined AWS project, clear internal ownership, and a delivery plan

- Poor candidate: An organization seeking broad bill reduction without a scoped initiative

- Key reality: Proposal effort, operating discipline, and post-credit planning all affect whether the grant creates lasting value

Used well, the AWS IMAGINE Grant can fund meaningful nonprofit infrastructure work. Used casually, it becomes an expensive application process followed by a short period of reduced billing.

6. AWS Cloud Credits for Research

A lab needs GPU time for two months to finish a paper. A university team wants to host a workshop dataset and tear it down after the event. Those are research credit cases. They are very different from a startup trying to extend runway or a nonprofit funding an ongoing service.

AWS has a research-focused path for academic and institutional work. It tends to fit bounded projects with a defined objective, a lead investigator or sponsor, and a clear end state. Good examples include experiments, benchmark runs, data processing tied to a study, temporary collaboration environments, and course or training infrastructure with a known timeline.

Why this path works

Research spend is often uneven. One phase may require intensive compute or storage, then usage drops sharply once the analysis, publication, or event is done. Credits are useful in that pattern because they reduce the cost of short, high-intensity periods that are hard to justify through standard department budgets.

That does not make this a substitute for long-term funding.

If the project turns into a standing platform, the cost model changes. At that point, the team needs normal cloud budgeting, ownership, and operating controls instead of treating credits as the plan.

How to use research credits well

The teams that get the most value usually define the workload before the credits arrive. They know which services they expect to use, what the spending ceiling should be, and which resources must be deleted when the work ends. Without that discipline, research environments drift. Expensive instances stay online between experiments, large volumes remain attached, and snapshots accumulate after the useful work is over.

I have seen research projects burn through credits on cleanup failures more often than on legitimate compute demand.

Set tags for project, owner, and end date at the start. Put budget alerts in place immediately. For temporary environments, schedule automatic shutdowns and review unused storage every week during active phases. CLOUD TOGGLE or a similar cost automation tool helps catch idle resources early, which matters even more in research because usage is spiky and easy to overlook between milestones.
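An end-date tag is only useful if something reads it. As a rough sketch, the check below lists resources whose end date has passed. The `end-date` tag key, the ISO date format, and the inventory structure are assumptions for this example, not an AWS convention.

```python
from datetime import date


def expired_resources(inventory: dict[str, dict[str, str]], today: date) -> list[str]:
    """Return IDs whose `end-date` tag (YYYY-MM-DD) is earlier than `today`.

    Resources with a missing or malformed end date are returned too,
    because a resource nobody scheduled for removal is a cleanup risk.
    """
    flagged = []
    for resource_id, tags in inventory.items():
        raw = tags.get("end-date", "")
        try:
            end = date.fromisoformat(raw)
        except ValueError:
            # Missing or unparseable date: flag for manual review.
            flagged.append(resource_id)
            continue
        if end < today:
            flagged.append(resource_id)
    return sorted(flagged)


if __name__ == "__main__":
    # Hypothetical research inventory.
    inventory = {
        "gpu-1": {"project": "paper-a", "end-date": "2026-03-31"},
        "vol-2": {"project": "workshop", "end-date": "2026-06-30"},
        "snap-3": {"project": "paper-a"},
    }
    print(expired_resources(inventory, date(2026, 5, 1)))
```

Flagging rather than deleting is a deliberate choice in this sketch. Research data is often hard to regenerate, so a person should confirm before anything is removed.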

Best fit and common failure mode

This program is a strong fit for research teams with a scoped project and an accountable technical owner. It is a weak fit for departments looking to cover general-purpose cloud usage without a defined workload.

The practical rule is simple. Treat research credits as funding for a specific piece of work, not as a blanket discount for ongoing infrastructure.

7. AWS Open Source Credits Program

A common pattern looks like this. The code is free, the user base is real, and the maintainers are paying for build jobs, package distribution, test environments, and public downloads out of pocket. Open source credits can help in that situation, but only when the AWS usage clearly supports the project’s public community operations.

AWS is evaluating whether the cloud spend serves an actual open source project, not whether a company can attach an open source label to private product infrastructure. That distinction matters. Teams that blur it usually waste time on an application that was never a fit.

The strongest candidates tend to have visible adoption, active maintenance, and infrastructure that the community depends on. Good examples include release artifact hosting, mirrors, documentation sites, CI workloads, and public services tied directly to the project. Private staging stacks, internal analytics, or customer-facing SaaS environments are much harder to justify under this program.

This is one of the more flexible credit paths, which is useful and risky at the same time.

Flexible credits let maintainers cover the services that drive project operations. They also disappear quickly if nobody defines spending limits. CI can spike after a busy release cycle. Storage grows when old artifacts and snapshots stay around. Data transfer can become the primary bill if the project distributes large binaries or serves a global user base.

Before applying, map the project’s AWS usage by function and owner. A simple view based on AWS Cost and Usage Reports makes it easier to separate community-serving infrastructure from everything else. That separation helps with the application, and it prevents a common failure mode where credits get spent on mixed workloads that should have had normal budget approval.
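To make that mapping concrete, here is a small sketch that totals spend by function and separates community-serving costs from everything else. The CSV layout is a simplified, hypothetical export. Real Cost and Usage Report files use different column names and many more fields, so treat the column names and figures here as placeholders.

```python
import csv
import io
from collections import defaultdict

# Hypothetical, simplified export; not the real Cost and Usage Report schema.
SAMPLE_CSV = """function,owner,community_serving,cost_usd
ci,alice,yes,120.50
artifact-hosting,bob,yes,310.00
docs-site,alice,yes,14.25
internal-analytics,carol,no,95.00
ci,alice,yes,60.25
"""


def cost_by_function(csv_text: str) -> dict[str, float]:
    """Sum cost per function label across all rows."""
    totals = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        totals[row["function"]] += float(row["cost_usd"])
    return {name: round(total, 2) for name, total in totals.items()}


def split_community_spend(csv_text: str) -> tuple[float, float]:
    """Return (community-serving total, everything-else total)."""
    community = other = 0.0
    for row in csv.DictReader(io.StringIO(csv_text)):
        cost = float(row["cost_usd"])
        if row["community_serving"] == "yes":
            community += cost
        else:
            other += cost
    return round(community, 2), round(other, 2)


if __name__ == "__main__":
    print(cost_by_function(SAMPLE_CSV))
    print(split_community_spend(SAMPLE_CSV))
```

The second number is the one to watch. Anything in the "everything else" bucket is spend that probably needs normal budget approval rather than open source credits.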

Use the credits on work that keeps the project available and healthy. Put tags on every resource. Set budget alerts on day one. Schedule cleanup for temporary runners, test environments, and unattached storage. If the project has uneven usage, CLOUD TOGGLE or a similar automation tool helps catch idle resources before maintainers burn limited credits on infrastructure nobody is using.

Good fit: maintainers funding public project operations with clear community benefit.

Poor fit: commercial teams trying to offset private product costs through an open source wrapper.

8. AWS Migration Acceleration Program credits

A company is halfway through a datacenter exit, the deadline is fixed, and the first cloud invoices are already landing before any legacy infrastructure is shut off. That is the kind of situation MAP credits are built for.

MAP supports migration and modernization work across the usual phases: assessment, mobilization, and workload migration. The value is not just the credits. The benefit is reducing the period where teams pay for old infrastructure and new infrastructure at the same time, while also funding the planning work that prevents expensive rework later.

This program has a higher operational bar than startup or community credit programs. Finance, platform engineering, application owners, and the migration lead need the same view of scope, timing, and exit criteria. Without that, credits disappear into duplicate environments, over-sized landing zones, and migration test stacks that nobody decommissions.

Start with cost visibility before the application goes in. A clean view from AWS Cost and Usage Reports helps separate steady-state cloud spend from temporary migration spend, which is the baseline needed to decide where credits change the business case. This is also where automation matters. CLOUD TOGGLE or a similar tool can catch idle instances, unattached volumes, and after-hours nonproduction environments so the credit pool funds migration progress instead of waste.

The best use case is a business with a defined migration wave, named owners, and a clear plan to turn legacy systems off. The weak use case is a team hoping credits will cover an open-ended cloud experiment with no retirement dates for the old estate.

For companies migrating customer-facing products, cost control is only part of the picture. Growth still depends on demand and discovery, so teams planning the commercial side should also review this practical guide to AI search visibility.

Good fit: organizations with committed migration scope, internal ownership, and realistic cutover milestones.

Poor fit: teams treating MAP as general-purpose free AWS credits without a migration program behind it.

9. AWS Generative AI Accelerator

A generative AI startup can burn through credits in weeks if the team treats every model test, retrieval experiment, and inference workflow as production-worthy. The AWS Generative AI Accelerator matters because it can offset part of that spend for a small group of selected companies, while also giving them technical support and startup-facing guidance.

This program works best as a force multiplier, not a base assumption in the budget. Selection is competitive, the cohort format has real expectations, and timing may not line up with a company’s product roadmap. Founders should model their AWS plan so the business still works with Free Tier, Activate, or normal cloud spend controls. If the accelerator comes through, it extends runway. It should not be the only answer.

The practical question is not just whether a startup can get credits. It is whether the team knows how to turn those credits into learning that changes product or revenue decisions.

Where it creates real value

The strongest candidates usually have a narrow generative AI use case, a working product direction, and a clear view of which AWS services are driving cost. That often means teams using Bedrock, SageMaker, vector storage, or evaluation pipelines with defined success criteria.

Used well, accelerator support can fund:

- model evaluation with a fixed test plan

- inference optimization before broader rollout

- security and production hardening for an AI feature already tied to a market need

- short feedback loops between engineering, product, and customer use

Used poorly, it funds noise. I see this pattern often in AI cost reviews. Teams run parallel experiments across too many models, keep every environment alive, and never set a stop rule for failed tests.

A better approach is simple. Assign a credit owner. Put expiration dates on dev environments. Set weekly checkpoints for cost per experiment, latency, and output quality. Use CLOUD TOGGLE or an equivalent cost automation tool to catch idle GPU instances, after-hours notebooks, and forgotten storage before they drain a limited credit pool.
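A stop rule is easier to enforce when it is written down as code instead of left to judgment in a meeting. The sketch below evaluates a weekly checkpoint against three thresholds. The threshold values, field names, and the quality score itself are assumptions, and each team would substitute its own metrics.

```python
from dataclasses import dataclass

# Assumed thresholds for illustration; set these from your own test plan.
MAX_COST_PER_RUN_USD = 5.00
MAX_P95_LATENCY_MS = 2000.0
MIN_QUALITY_SCORE = 0.70


@dataclass
class Checkpoint:
    experiment: str
    weekly_cost_usd: float
    runs: int               # evaluation runs completed this week
    p95_latency_ms: float
    quality_score: float    # 0.0 to 1.0 on the team's own eval set


def cost_per_run(cp: Checkpoint) -> float:
    """Weekly cost divided by completed runs.

    Spend with zero runs counts as infinitely expensive; no spend and
    no runs counts as zero.
    """
    if cp.runs > 0:
        return cp.weekly_cost_usd / cp.runs
    return float("inf") if cp.weekly_cost_usd > 0 else 0.0


def stop_reasons(cp: Checkpoint) -> list[str]:
    """Return the reasons to stop an experiment; an empty list means continue."""
    reasons = []
    if cost_per_run(cp) > MAX_COST_PER_RUN_USD:
        reasons.append("cost per run above limit")
    if cp.p95_latency_ms > MAX_P95_LATENCY_MS:
        reasons.append("latency above limit")
    if cp.quality_score < MIN_QUALITY_SCORE:
        reasons.append("quality below floor")
    return reasons
```

An experiment that spends money without completing any runs fails the cost check automatically, which catches the forgotten notebook or idle endpoint case without a separate rule.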

For founders working on distribution as seriously as infrastructure, this broader practical guide to AI search visibility is worth reviewing. Strong unit economics help, but discovery still determines whether usage grows into a business.

Good fit and poor fit

Good fit: startups with a defined generative AI product, measurable technical milestones, and discipline around experiment spend.

Poor fit: teams applying because they want general-purpose free AWS credits without a clear workload plan, cost controls, or a path from prototype to production.

10. Generative AI Impact Initiatives

A school district building an AI tutor, a public health team triaging multilingual requests, or a nonprofit summarizing case notes all face the same problem. Model costs can rise before the project proves real public value. Generative AI Impact initiatives are better suited to that kind of work than startup credit programs built around commercial growth.

These offers are aimed at public sector, education, and mission-driven organizations with a defined service outcome. The strongest applications usually tie the AI workload to a measurable operational result, such as faster response times, better access to information, or lower manual review effort. General interest in AI is not enough.

This category also deserves a different credit strategy. The goal is not to spread credits across broad experimentation. The goal is to fund a narrow, high-value use case, set usage limits early, and protect the budget from drift. In practice, that means choosing one production candidate, setting token and inference guardrails, and shutting down side projects that are not tied to the mission case.

Where this is strongest

Generative AI Impact initiatives fit organizations that can show both public benefit and delivery discipline. Educational institutions, public agencies, healthcare-adjacent nonprofits, and similar groups often have a stronger case here than they would in Activate or founder-focused programs.

Partnerships matter too. Some teams pair cloud credits with grants, implementation partners, or outside capital to cover staffing and rollout costs that credits do not pay for. For organizations exploring the funding side, this list of top Generative AI investors in the United States can help with external fundraising research.

What to watch

These initiatives are selective and often tied to a defined application window or campaign. Timing matters. So does scope control.

The common failure mode is treating mission-driven credits like open-ended R&D budget. Teams keep multiple model variants running, retain large datasets they no longer need, and leave development environments active between review cycles. Credits disappear fast under that pattern. A better setup assigns one owner to the credit pool, reviews spend weekly, and uses CLOUD TOGGLE or a similar automation tool to catch idle GPU instances, unused endpoints, and storage growth before they eat into the budget.

If the project is commercial first, this program is usually a poor fit. If the organization can show a clear public outcome, a realistic implementation plan, and cost controls from day one, it can be one of the more strategic AWS credit paths available.

For current public-sector focused details, review the AWS Generative AI Impact Initiative announcement.

Top 10 Free AWS Credits Comparison

| Program | Core Offer | Target Audience | Primary Use Cases | Access & Limits |
|---|---|---|---|---|
| AWS Free Tier (New Credit Based Model) | Up to $200 in promotional credits; coexists with select Always Free offers | New AWS accounts, trialists, POCs | Short-term exploration, labs, testing new services (incl. AI) | Automatic on signup for eligible accounts; one-time pool, expires |
| AWS Activate Founders | Typically ~$1,000 credits + developer support & education | Early-stage founders without accelerator/VC backing | Prototype build, early development and testing | Simple application/verification; modest credit size |
| AWS Activate Portfolio | $10k–$100k+ credits plus support plan offers | Startups backed by approved accelerators/VCs | Larger infra needs, scale-up and pre-production workloads | Requires provider affiliation and documentation; caps apply |
| AWS Nonprofit Credit Program (via TechSoup) | Annual promotional credit allotments via TechSoup | Registered nonprofits (e.g., US 501(c)(3)) | Reduce hosting/operational costs for mission apps and sites | Streamlined nonprofit verification; amounts vary by region |
| AWS IMAGINE Grant | Grants combined with AWS credits, technical mentoring and PR | US-registered nonprofits with high-impact projects | Large-scale, mission-driven cloud & AI initiatives | Competitive selection, detailed proposals and reporting required |
| AWS Cloud Credits for Research | Project-focused credits for research POCs and events | Accredited researchers and academic institutions | Research POCs, benchmarks, training, open science tooling | Formal application and project plan required; time-boxed use |
| AWS Open Source Credits Program | Credits for CI, testing, hosting mirrors and community services | Open source project maintainers and community projects | Sustain OSS infrastructure: CI pipelines, registries, mirrors | Approval-based via application; amounts and terms vary |
| AWS Migration Acceleration Program (MAP) Credits | Credits & funding tied to migration phases (Assess/Mobilize/Migrate) | SMBs and enterprises planning cloud migrations | Offset migration costs, use partner delivery and tooling | Requires approved migration plan and often partner engagement |
| AWS Generative AI Accelerator | Very large credit packages (up to ~$1M), mentorship, GTM support | Elite early-stage generative AI startups | Heavy AI/ML infrastructure, rapid product development | Cohort-based, highly competitive, intensive commitment |
| Generative AI Impact Initiatives | Large credit pools + training & technical enablement for public sector/edu | Public sector orgs, education providers, mission-driven projects | Generative AI pilots, education equity solutions, public good projects | Time-limited windows, narrow eligibility, competitive selection |

From Credits to Sustainable Growth

A team gets approved for AWS credits, spins up fast, and feels covered for a few months. Then the credits burn down faster than expected because staging ran 24/7, old test volumes stayed attached, and nobody priced the post-credit baseline. The credit program was not the problem. The operating model was.

Free AWS credits buy time to learn, migrate, prototype, or launch. They do not replace cost control. Each program in this guide has different limits, timelines, and eligibility rules, but they all create the same requirement: use the credit window to build habits you can afford after the subsidy ends.

A common mistake is confusing credit balance with budget discipline. Founders delay cleanup because the bill is not hitting cash yet. Nonprofits keep temporary environments alive because no one owns shutdown schedules. Migration teams leave duplicate workloads running after cutover. Research groups finish the experiment and forget the infrastructure around it. Credits hide waste for a while. They do not fix it.

That matters even more under the newer Free Tier model. New accounts now face a shorter, credit-based evaluation window than the older service-by-service approach offered. In practice, that reduces the margin for idle compute, overprovisioned databases, and forgotten storage.

Native AWS tools are useful for visibility. They help teams see consumption, thresholds, and upcoming exposure. They do not automatically stop low-value runtime across dev, QA, staging, demos, and one-off experiments. If the goal is to stretch credits, prevention matters more than reporting.

The practical framework is straightforward:

- Pick the right credit lane: A bootstrapped founder, portfolio-backed startup, nonprofit, researcher, open source maintainer, public sector applicant, and migration program owner should not use the same playbook.

- Model real spend early: Estimate what the environment costs with credits and what it costs after they expire. That changes architecture decisions.

- Protect the cheap wins: Small always-free or low-cost allowances disappear quickly when oversized instances, snapshots, data transfer, or idle databases pile on top.

- Automate shutdowns: Any environment that does not need nights or weekends should have schedules, not reminders.

- Set an exit plan before day one: Define who owns the paid-state budget, what gets downsized, and which workloads get turned off if the credit period ends before the project proves value.
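The "model real spend early" step is mostly arithmetic, and a rough sketch makes the trade-off visible. The hourly rate and instance count below are hypothetical placeholders, not AWS prices, and the model covers compute only. The $1,000 balance in the example mirrors the typical Founders amount from the comparison table.

```python
HOURS_PER_MONTH_ALWAYS_ON = 730            # 24 * 365 / 12
HOURS_PER_MONTH_SCHEDULED = 50 * 52 / 12   # assumed 50 h/week schedule


def monthly_compute_cost(hourly_rate: float, instances: int, hours: float) -> float:
    """Compute-only monthly cost; storage and data transfer are excluded."""
    return hourly_rate * instances * hours


def credit_runway_months(credits: float, monthly_cost: float) -> float:
    """Months until the credit balance is exhausted at a constant burn."""
    if monthly_cost <= 0:
        return float("inf")
    return credits / monthly_cost


if __name__ == "__main__":
    rate, count, credits = 0.10, 4, 1000.0   # hypothetical values
    for label, hours in (
        ("always on", HOURS_PER_MONTH_ALWAYS_ON),
        ("scheduled", HOURS_PER_MONTH_SCHEDULED),
    ):
        cost = monthly_compute_cost(rate, count, hours)
        runway = credit_runway_months(credits, cost)
        print(f"{label}: ${cost:.2f}/month, {runway:.1f} months of runway")
```

With these placeholder numbers, the same $1,000 lasts about 3.4 months when four instances run continuously and about 11.5 months on the working-hours schedule. The monthly cost figure also doubles as the post-credit baseline, which is the number to check against the budget before the credits expire.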

SMBs often need this discipline most. Many for-profit small businesses will not qualify for the largest grant-style programs in this list, so the practical path is usually a smaller credit pool plus tighter operating controls. That is less exciting than chasing a large award, but it is usually more realistic.

If you want credits to last longer, CLOUD TOGGLE helps by automatically powering off idle servers and virtual machines on schedules your team controls, without handing full cloud-console access to every stakeholder. That is useful during a credit-funded trial, and it stays useful after the credits are gone.

Used well, free AWS credits fund learning, migration progress, and product validation. Used casually, they delay a cost problem by a few months. The difference comes from budgeting, tagging, ownership, and automation.