Your team launches in AWS or Azure because it’s fast. A few months later, the invoices stop looking like startup-friendly utility bills and start looking like infrastructure debt. Nobody made one catastrophic choice. A dozen small ones piled up. Test servers stayed on overnight. Data moved between services more than expected. “Temporary” environments became permanent. The result is the same: the public vs private cloud debate stops being theoretical the moment your monthly spend outgrows your assumptions.

That’s why this decision belongs with the CTO, not just the platform team. It affects cash flow, delivery speed, audit posture, procurement, and how much operational pain your engineers absorb every week.

The wrong question is “Which cloud model is better?” The right question is “Which workloads belong where, and what does that choice really cost to run?”

| Decision area | Public cloud | Private cloud | Hybrid cloud |
|---|---|---|---|
| Best fit | Variable demand, fast experimentation, managed services | Steady workloads, tighter control, custom compliance needs | Mixed workload estates with different cost and security needs |
| Cost pattern | Low entry barrier, variable monthly spend, hidden usage fees can accumulate | Higher setup commitment, more predictable run-rate when utilization is stable | Split model. Lower baseline costs for some workloads, flexibility for peaks |
| Operations | Faster provisioning, less hardware ownership | More responsibility for capacity, lifecycle, and platform operations | Highest coordination overhead, but strongest placement flexibility |
| Security model | Shared responsibility with provider | Full control and full responsibility | Policy-heavy. Security depends on consistent controls across both sides |
| Performance model | Elastic, but can vary by tenancy and service behavior | Predictable on dedicated infrastructure | Put predictable workloads private, bursty workloads public |

The Billion Dollar Question Your Business Must Answer

Most businesses don’t revisit their cloud strategy until finance asks why infrastructure spend jumped again. By then, the architecture has already hardened around convenience. Reversing that later is expensive, politically awkward, and technically messy.

That timing matters because public cloud demand is still growing fast. In 2025, global public cloud spending is projected to reach $723.4 billion, a 21.5% increase from 2024, driven by AI infrastructure and generative AI services, according to cloud spending projections collected by Keywords Everywhere. More companies are buying cloud, but that doesn’t mean more companies are buying it efficiently.

What this decision really controls

This isn’t a branding choice between AWS, Azure, or “on-prem.” It’s a choice about where you want to pay for flexibility and where you want to pay for control.

Three questions decide most outcomes:

- How variable is demand? If your workload swings hard by hour, day, or season, public cloud often earns its keep.

- How expensive is idle capacity? If environments sit on all day waiting for occasional use, you’re paying for convenience more than compute.

- How much control do auditors, customers, or regulators expect? Some teams can work within provider guardrails. Others need tighter boundaries.

Practical rule: If your bill keeps rising while user demand stays relatively stable, you probably don’t have a cloud problem. You have a placement and governance problem.

Why SMBs feel this faster

Large enterprises can bury waste inside a bigger budget for a while. SMBs can’t. One oversized environment, one badly scoped backup pattern, or one fleet of forgotten development VMs can distort the entire infrastructure budget.

That’s why the public vs private cloud decision works best as a workload placement exercise, not a belief system. Public cloud buys speed. Private cloud buys predictability. Hybrid buys optionality, but only if you manage it deliberately.

Public vs Private Cloud Fundamentals

Public cloud is rented infrastructure operated by a provider such as AWS, Azure, or Google Cloud. You consume shared infrastructure on demand, usually with pay-as-you-go pricing, and the provider manages the underlying facilities and hardware.

Private cloud is dedicated infrastructure for one organization. It can live in your own data center or in a hosted environment, but the key point is isolation and control. You decide more of the architecture, operations, and policy surface.

A simple way to think about it

Public cloud is like renting a fully serviced apartment. You can move in quickly, add space when you need it, and avoid dealing with the building itself. But you live within the landlord’s rules, pricing model, and building design.

Private cloud is like owning a custom house. You get dedicated space, tighter control, and the ability to shape the environment around your needs. You also own the maintenance decisions, the upgrade path, and the consequences of bad planning.

The operational difference that matters

The technical definitions are easy. The operational implications are what usually get missed.

With public cloud, you get:

- Fast provisioning for environments, experiments, and product launches

- Elastic scaling when traffic or compute demand changes quickly

- Managed services that reduce platform work for databases, messaging, analytics, and AI

- Usage-based billing that feels efficient early on, but becomes harder to forecast later

With private cloud, you get:

- Dedicated resources for more stable performance behavior

- Custom security boundaries that are easier to align with specific internal policies

- More cost predictability when workloads are steady and capacity is well utilized

- More platform responsibility for operations, upgrades, capacity planning, and failure handling

Public cloud removes a lot of hardware pain. It doesn’t remove architecture discipline.

What people often confuse

A private cloud isn’t just “old on-prem with a nicer name.” If it’s designed well, it includes automation, virtualization, self-service, policy control, and repeatable operations.

A public cloud isn’t automatically cheap because it has no upfront hardware purchase. It’s just easier to start.

That distinction matters because many teams choose public cloud for the right reason, speed, and then keep every workload there for the wrong reason, inertia.

The Ultimate Cost Showdown Beyond CapEx and OpEx

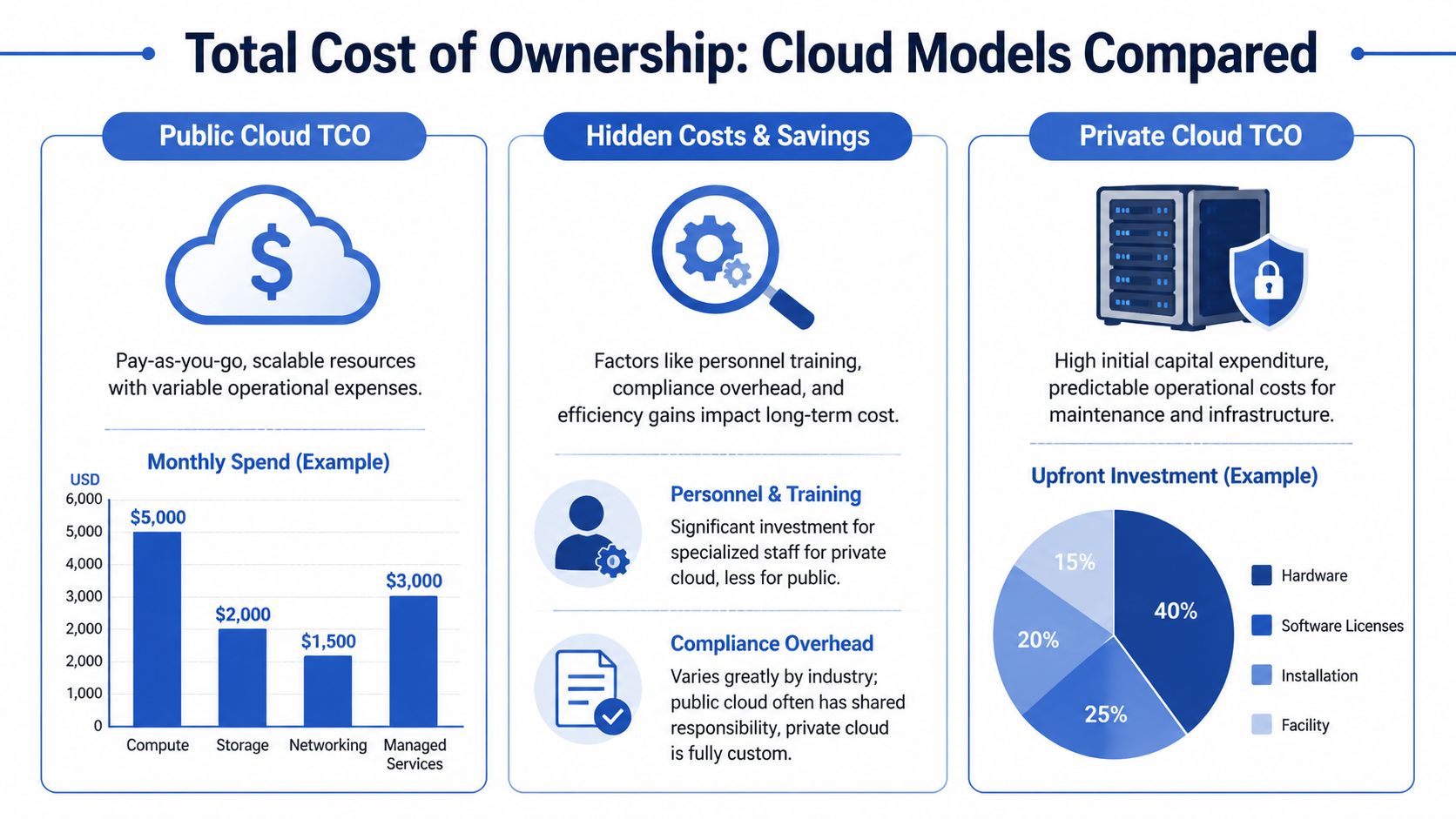

Most cloud cost conversations are too shallow to be useful. People reduce the whole issue to CapEx versus OpEx, then act surprised when the monthly bill says something else. In practice, the fight is between visible cost and operationally invisible cost.

Public cloud looks clean on a slide deck because there’s no hardware purchase order up front. That doesn’t mean it’s cheaper. It means the complexity moves into the bill.

Where public cloud bills become hard to trust

The first layer is obvious: compute, storage, and networking. The second layer is where many SMBs and mid-market teams get hit. Data egress, snapshot growth, API-driven service usage, premium support, and duplicated environments all stack on top of the base bill.

Idle compute is the most common offender because it rarely feels urgent. A development server that stays online overnight doesn’t trigger an outage. It just turns convenience into recurring spend.

FinOps maturity is a critical factor. Teams that actively schedule non-production resources, rightsize instances, and review usage patterns tend to keep public cloud cost under control. Teams that rely on “we’ll clean it up later” usually don’t.
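To make the idle-compute point concrete, here is a minimal sketch of the arithmetic behind scheduling a non-production server. The hourly rate and the 10-hours-a-day, weekday-only schedule are assumptions chosen for illustration, not real provider prices or a recommended policy.

```python
HOURS_PER_WEEK = 24 * 7  # 168 hours in a week


def weekly_compute_cost(hourly_rate_usd: float, hours_on: float) -> float:
    """Compute-only cost for one server over a week."""
    return hourly_rate_usd * hours_on


def savings_fraction(scheduled_hours: float) -> float:
    """Share of always-on compute cost avoided by running only scheduled hours."""
    return 1 - scheduled_hours / HOURS_PER_WEEK


# Assumed schedule: 10 hours a day, Monday to Friday.
scheduled_hours = 10 * 5
hourly_rate_usd = 0.20  # hypothetical on-demand rate, not a real price

always_on = weekly_compute_cost(hourly_rate_usd, HOURS_PER_WEEK)
scheduled = weekly_compute_cost(hourly_rate_usd, scheduled_hours)

print(f"Always on: ${always_on:.2f}/week")
print(f"Scheduled: ${scheduled:.2f}/week")
print(f"Compute cost avoided: {savings_fraction(scheduled_hours):.0%}")
```

Under these assumptions the server runs 50 of 168 hours, so roughly 70% of its compute cost disappears regardless of the hourly rate. The sketch covers compute charges only. Storage attached to a stopped server is typically still billed, so the real saving on the full line item will be somewhat smaller.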

For teams trying to get more disciplined, the PushOps platform for cost control is a useful overview of how cloud cost optimization works in operations, especially around waste detection and policy-based controls.

The tipping point is real

At small scale, public cloud often wins because speed and flexibility matter more than absolute efficiency. At sustained scale, the equation changes.

A concrete benchmark makes the point. For a medium deployment of 500 VMs, public cloud costs average around $28,925 per month, compared to $7,375 for a self-managed private cloud, which can mean more than $250,000 in annual savings, based on hosting cost comparisons published by Black Box Hosting.
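The arithmetic behind that benchmark is simple enough to check directly. The sketch below uses only the two monthly figures and the VM count quoted above; the per-VM breakdown is a derived illustration, not a number from the cited comparison.

```python
# Figures quoted above for a 500-VM deployment.
PUBLIC_MONTHLY_USD = 28_925
PRIVATE_MONTHLY_USD = 7_375
VM_COUNT = 500

monthly_gap = PUBLIC_MONTHLY_USD - PRIVATE_MONTHLY_USD
annual_gap = monthly_gap * 12

# Derived per-VM view, useful when comparing against your own estate size.
public_per_vm = PUBLIC_MONTHLY_USD / VM_COUNT
private_per_vm = PRIVATE_MONTHLY_USD / VM_COUNT

print(f"Monthly gap: ${monthly_gap:,}")
print(f"Annual gap:  ${annual_gap:,}")
print(f"Per VM per month: public ${public_per_vm:.2f}, private ${private_per_vm:.2f}")
```

The monthly gap is $21,550, which annualizes to $258,600 and matches the "more than $250,000" claim. Swap in your own invoice totals to see whether your estate is anywhere near the same ratio.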

That doesn’t mean every 500-VM deployment should move tomorrow. It means the burden of proof changes. Once you reach that level of stable demand, staying fully public requires a stronger economic argument than is commonly anticipated.

What SMBs usually miss in TCO models

A serious total cost model has to include more than vendor list pricing.

Consider these cost buckets:

- Idle environments: Non-production workloads often run longer than their business value justifies. This is usually the easiest waste to eliminate.

- Data movement: Architects focus on where applications run. Finance eventually notices where data flows.

- Management overhead: Public cloud reduces hardware work, but it doesn’t eliminate cost governance work. Someone still has to own lifecycle, scheduling, cleanup, and accountability.

- Private cloud staffing assumptions: Some teams overestimate the people cost of private cloud and underestimate the governance cost of sprawling public estates.

If a workload is steady, boring, and always on, public cloud’s convenience premium can become expensive fast.

A practical way to evaluate TCO

Don’t model “the cloud” as one block. Split your estate into workload groups and evaluate each one separately.

| Workload type | Common reality | Usually better starting point |
|---|---|---|
| Dev and test | Often idle outside work hours | Public cloud with strict scheduling and cleanup |
| Customer-facing bursty apps | Demand changes unpredictably | Public cloud |
| Stable internal services | Runs continuously with known demand | Private cloud or hosted private |
| Sensitive systems with fixed patterns | Needs control and predictability | Private cloud |
| Mixed estates | Some spiky, some steady | Hybrid |

One of the better habits here is to review cloud costs through workload behavior, not account structure. Finance sees line items. Platform teams need to see patterns. A useful companion read on that point is this breakdown of the real cost of cloud infrastructure.
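As a rough illustration of reviewing spend by workload behavior rather than account structure, the sketch below groups the same billing line items two ways. The accounts, workload tags, and dollar amounts are all hypothetical, and it assumes your resources already carry some kind of workload tag.

```python
from collections import defaultdict

# Hypothetical monthly line items; every value here is made up for illustration.
line_items = [
    {"account": "shared", "workload": "dev-test", "usd": 1800.0},
    {"account": "shared", "workload": "internal-steady", "usd": 2600.0},
    {"account": "prod", "workload": "customer-bursty", "usd": 4200.0},
    {"account": "prod", "workload": "internal-steady", "usd": 1400.0},
]


def spend_by(items, key):
    """Sum monthly spend grouped by the given field ('account' or 'workload')."""
    totals = defaultdict(float)
    for item in items:
        totals[item[key]] += item["usd"]
    return dict(totals)


by_account = spend_by(line_items, "account")    # the view finance usually sees
by_workload = spend_by(line_items, "workload")  # the view placement decisions need

print("By account: ", by_account)
print("By workload:", by_workload)
```

In this made-up estate the account view shows two similar totals, while the workload view shows that steady internal services are spread across both accounts and add up to a placement candidate on their own.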

What works and what doesn’t

What works:

- aggressive shutdown schedules for non-production resources

- rightsizing tied to actual usage, not developer guesses

- moving stable, long-running workloads into environments with more predictable economics

- reviewing data transfer architecture before it becomes a billing surprise

What doesn’t:

- assuming reserved pricing alone solves waste

- treating every internal tool like a production-critical service

- postponing cleanup until the next quarterly review

- comparing public and private cloud using only procurement language

The best cost decisions in the public vs private cloud debate aren’t ideological. They come from admitting that idle compute has a price, and somebody is already paying it.

Evaluating Security and Compliance Tradeoffs

A CTO signs off on a cloud migration to simplify operations. Six months later, the team is spending more time on access reviews, exception handling, audit evidence, and incident response runbooks than before. That is the security question in the public vs private cloud decision: which model reduces risk for this workload with the team you have?

Public cloud and private cloud can both be secure. They fail in different ways, and they create different operating burdens. That matters for TCO because security work is not abstract overhead. It is headcount, tooling, review cycles, and a lot of time spent keeping low-value systems compliant long after business hours.

Public cloud reduces infrastructure burden, not accountability

AWS, Azure, and Google Cloud secure the facilities, hardware, and large parts of the control plane. Your team still owns identity design, network exposure, encryption choices, secret storage, logging, detection, backup policy, and recovery testing.

That division works well for teams with strong platform discipline. It breaks down fast when cloud resources spread faster than governance. The common failure modes are familiar:

- IAM roles with far more access than the application needs

- test systems exposed to the internet and forgotten

- inconsistent guardrails across accounts, subscriptions, or projects

- logs collected but not reviewed

- managed services adopted without clear data classification rules

A practical summary of those day-to-day risks is this guide to common security challenges in cloud computing.
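The first failure mode in that list, roles with far more access than the application needs, is one of the easier ones to check mechanically. The sketch below is a simple heuristic over an AWS-style JSON policy document (the `Statement`, `Effect`, `Action`, and `Resource` elements). The function names and the example policy are hypothetical, and it deliberately ignores `NotAction`, conditions, and permission boundaries, so treat it as a starting point rather than a substitute for a provider's own policy analysis tooling.

```python
def as_list(value):
    """Policy elements may be a single value or a list; normalize to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def overly_broad_statements(policy: dict) -> list:
    """Return Allow statements that pair a wildcard action with a wildcard resource."""
    findings = []
    for stmt in as_list(policy.get("Statement")):
        if stmt.get("Effect") != "Allow":
            continue
        actions = as_list(stmt.get("Action"))
        resources = as_list(stmt.get("Resource"))
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        wildcard_resource = "*" in resources
        if wildcard_action and wildcard_resource:
            findings.append(stmt)
    return findings


# Hypothetical policy: one narrowly scoped statement, one far too broad.
example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:GetObject",
         "Resource": "arn:aws:s3:::example-bucket/*"},
        {"Effect": "Allow", "Action": ["ec2:*"], "Resource": "*"},
    ],
}

for stmt in overly_broad_statements(example_policy):
    print("Review this statement:", stmt)
```

Only the second statement is flagged. A check like this is most useful when it runs on every policy change, since the governance problem described above is drift over time rather than one bad policy.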

Private cloud gives tighter control, and more operational work

Private cloud is attractive when the business needs stricter isolation, custom controls, specific residency boundaries, or a simpler audit narrative. Those are valid reasons. But the control only has value if the team can operate it well every week, not just during the design review.

In private environments, you own patch cadence, hypervisor hardening, segmentation, monitoring coverage, firmware lifecycle, privileged access paths, and incident response end to end. You also own capacity kept online for resilience, even when utilization is low. For SMBs and lean DevOps teams, that idle but protected capacity changes the TCO calculation more than many cloud comparisons admit.

Control is a security feature only when operations can sustain it.

Why some teams pull workloads back

Workload repatriation often gets framed as a pure cost story. In practice, security and compliance are usually part of the decision. Some teams move specific systems back because they want narrower trust boundaries, more predictable data handling, or fewer shared platform abstractions in scope for audits. Industry analysis from IDC on cloud repatriation trends points to security, governance, and cost control as recurring reasons organizations reassess where workloads belong.

That does not mean private cloud is safer by default. It means some workloads become easier to defend, explain, and certify when the environment is more constrained.

Compliance is usually a fit problem

Auditors do not award points for ideology. They ask whether controls are enforced, evidence is available, and responsibilities are clear.

Public cloud is often a strong fit for standard business systems when the architecture is clean and policy enforcement is consistent. Private cloud is often easier to justify for systems with unusual control requirements, customer-specific handling rules, or environments where a dedicated boundary shortens every audit conversation.

| Requirement | Public cloud | Private cloud |
|---|---|---|
| Standard business apps | Usually practical with strong configuration discipline | Also viable, but often more work than needed |
| Strict residency demands | Possible, but design constraints matter | Often easier to align with custom requirements |
| Highly customized security controls | Limited by provider models and service boundaries | Easier to tailor |
| Team with strong cloud security practice | Very workable | Also workable |
| Team needing simple isolation narrative for audits | Can be harder to explain | Often easier to demonstrate |

The mistake is choosing public or private first, then forcing every workload into that answer. The better approach is to map sensitivity, evidence requirements, and operating capacity workload by workload. That usually produces a mixed answer, and it usually saves money by avoiding private infrastructure for systems that do not need private-grade control.

Comparing Performance Scalability and Predictability

Performance discussions often mix together three different needs: raw speed, elasticity, and consistency. They’re related, but they’re not the same thing. Public cloud is excellent at rapid scaling. Private cloud is often stronger at predictable behavior over time.

That distinction matters because many application teams don’t need maximum elasticity every minute. They need stable response times, known resource behavior, and fewer surprises under load.

When public cloud performs best

Public cloud shines when demand changes quickly or unpredictably. E-commerce peaks, campaign traffic, batch jobs, temporary environments, and short-lived engineering experiments all benefit from infrastructure you can provision quickly and release just as quickly.

That flexibility also helps product teams move faster. If you need a new environment this afternoon, public cloud is hard to beat.

But there’s a tradeoff. Shared infrastructure and service-layer abstractions can introduce variability. You might not notice it in general business applications. You’ll notice it fast in latency-sensitive systems, real-time processing, tightly tuned databases, or workloads with hard performance expectations.

When private cloud earns its keep

Private infrastructure is usually more interesting for workloads that are steady and performance-sensitive. Dedicated resources reduce contention. Platform teams can tune storage, networking, and compute placement around the application rather than around a provider’s default abstractions.

That’s why stable workloads often feel calmer in private environments. Engineers spend less time chasing intermittent behavior caused by tenancy effects or service throttling patterns they don’t directly control.

A measurable version of that tradeoff exists. Private cloud environments can show 22-31% less performance variation for mission-critical workloads, avoiding the multi-tenant noisy-neighbor issues and throttling behavior sometimes seen in public cloud, according to performance comparison benchmarks from Hykell.

The right performance question isn’t “Which is faster?” It’s “Do we need elasticity or consistency more often?”
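One way to put a number on "consistency" for your own workloads is the coefficient of variation of response times: standard deviation divided by mean. The sketch below uses made-up latency samples, and it is a generic statistic, not the method used in the benchmark cited above.

```python
import statistics


def coefficient_of_variation(samples):
    """Population standard deviation divided by the mean (unitless spread)."""
    mean = statistics.mean(samples)
    return statistics.pstdev(samples) / mean


# Hypothetical response times in milliseconds for the same request type.
steady_env_ms = [100, 102, 98, 101, 99]
variable_env_ms = [80, 140, 95, 160, 75]

cv_steady = coefficient_of_variation(steady_env_ms)
cv_variable = coefficient_of_variation(variable_env_ms)

print(f"Steady environment CV:   {cv_steady:.3f}")
print(f"Variable environment CV: {cv_variable:.3f}")
```

Comparing the two values for the same workload on different platforms gives leadership a concrete figure to discuss. Use real samples collected over days or weeks, since short test runs tend to hide the variation that matters.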

A workload-first view

Here’s the practical split I use with leadership teams:

- Choose public cloud first when the workload is bursty, uncertain, or tied to fast experimentation.

- Choose private cloud first when the workload is stable, long-running, and sensitive to latency variation.

- Choose hybrid placement when the base load is predictable but peak demand isn’t.

The hidden cost of unpredictability

Performance variance becomes expensive long before it becomes a board-level incident. Developers add retries. SREs overprovision. Database teams add bigger instance classes “just in case.” None of that looks like a performance problem on paper. It shows up as extra spend and operational friction.

Private cloud can reduce that pressure when the application profile is right. Public cloud can avoid overbuilding when the traffic profile is uncertain. Both are valid. Problems start when teams force a steady workload into an elasticity-first platform, or a fast-changing workload into a capacity-constrained one.

What CTOs should ask the team

Instead of asking whether the application is “critical,” ask:

- Does demand change materially through the week?

- Do users care about consistent latency more than headline scale?

- Are engineers compensating for infrastructure behavior in code or overprovisioning?

- Would capacity planning be easier than constant spend tuning?

If the answers cluster around consistency, private starts looking stronger. If they cluster around volatility and speed, public usually wins.

Embracing the Hybrid Cloud Compromise

A common CTO scenario looks like this. The core application runs at 55 to 65 percent utilization most of the month, then traffic jumps for three days after a product launch or billing cycle. Keeping all of that capacity in private infrastructure means paying for idle headroom. Keeping all of it in public cloud can turn short bursts into a surprisingly large monthly bill. Hybrid exists for this gap.

Hybrid cloud works when workload placement follows operating reality, not politics. It gives teams a way to keep stable demand on infrastructure they can size and control, then use public cloud where elasticity, managed services, or geographic reach justify the premium. That can improve TCO, but only if the split is deliberate and the team can operate both sides well.
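The scenario above can be turned into a rough three-way comparison. Everything numeric in this sketch is an assumption for illustration: a base load of 100 capacity units, a peak of 160 units for three days, a flat private cost per unit per month, and an on-demand public cost per unit-hour. It also leaves out the integration and operations overhead of running two platforms.

```python
HOURS_IN_MONTH = 30 * 24  # simplification: a 30-day month


def all_private(peak_units, private_unit_month_usd):
    """Private capacity must be sized for the peak and is paid for all month."""
    return peak_units * private_unit_month_usd


def all_public(base_units, peak_units, burst_hours, public_unit_hour_usd):
    """Pay per unit-hour for the base load, and for the peak during the burst."""
    normal_hours = HOURS_IN_MONTH - burst_hours
    unit_hours = base_units * normal_hours + peak_units * burst_hours
    return unit_hours * public_unit_hour_usd


def hybrid(base_units, peak_units, burst_hours,
           private_unit_month_usd, public_unit_hour_usd):
    """Base load on private capacity, only the excess rented during the burst."""
    private_part = base_units * private_unit_month_usd
    burst_part = (peak_units - base_units) * burst_hours * public_unit_hour_usd
    return private_part + burst_part


# Hypothetical inputs, not real prices.
BASE, PEAK, BURST_HOURS = 100, 160, 3 * 24
PRIVATE_RATE, PUBLIC_RATE = 20.0, 0.06

private_cost = all_private(PEAK, PRIVATE_RATE)
public_cost = all_public(BASE, PEAK, BURST_HOURS, PUBLIC_RATE)
hybrid_cost = hybrid(BASE, PEAK, BURST_HOURS, PRIVATE_RATE, PUBLIC_RATE)

print(f"All private: ${private_cost:,.2f}")
print(f"All public:  ${public_cost:,.2f}")
print(f"Hybrid:      ${hybrid_cost:,.2f}")
```

With these made-up rates the result is about $3,200 all private, $4,579 all public, and $2,259 hybrid. The ordering depends entirely on the assumed rates and burst length, so the value of the sketch is the structure of the comparison, not the specific totals.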

Where hybrid makes economic sense

The strongest hybrid cases usually have one thing in common. They separate always-on capacity from occasional demand.

A few patterns show up repeatedly:

- Baseline private, burst public: Keep the steady-state workload on infrastructure sized for normal demand. Push temporary spikes into public cloud instead of funding idle servers year-round.

- Sensitive systems private, customer-facing services public: Put regulated data stores or tightly controlled internal systems in a private environment. Run the web tier, APIs, or mobile back ends in public cloud where scale and managed services help.

- Private primary, public recovery: Use public cloud as the recovery target instead of building a second private site that sits mostly unused.

That last point matters more than many SMBs expect. Disaster recovery capacity is often idle compute with a nice name attached to it. Hybrid can cut that waste, but only if failover design, replication cost, and testing discipline are accounted for. A useful reference is this guide to hybrid cloud architecture patterns.

Where hybrid gets expensive fast

Hybrid is not a middle ground that automatically lowers risk or cost. It often adds a second control plane, a second monitoring model, and a second set of failure modes.

The main failure pattern is operational sprawl:

- inconsistent IAM and policy models

- poor cost visibility across environments

- networking designed per platform instead of end to end

- application dependencies that block realistic portability

- teams staffed to run one environment well, but not two

I have seen companies save money on compute placement and give it all back in integration work, duplicated tooling, and after-hours troubleshooting. If the team has to maintain separate runbooks, security reviews, and deployment logic for each side, hybrid starts behaving like two platforms with a tax on top.

Security has to work as one system

Hybrid security breaks down when access, segmentation, and logging are handled differently in each environment. Auditors notice. Incident responders notice faster.

The practical goal is one policy model, one identity strategy, and one clear path for tracing traffic and changes across both sides. Teams working through that problem should start with securing hybrid cloud infrastructure, because the hard part is rarely a single vendor feature. It is getting network controls, identity, and monitoring to behave consistently under pressure.

After the architecture is in place, visibility matters just as much as design.

The practical reason CTOs choose hybrid

CTOs usually choose hybrid for selective optimization, not ideology. They want to stop paying public cloud rates for predictable, always-on workloads without giving up the speed and flexibility that public cloud is good at.

| Workload pattern | Better hybrid approach |
|---|---|
| Stable core app with occasional spikes | Keep the base load private. Use public cloud for short demand surges |
| Regulated data with internet-facing UX | Keep data services private. Run web and app tiers publicly where it fits |
| Legacy estate plus new cloud-native apps | Keep existing private investments. Build new services in public cloud |
| Pressure to cut cloud bills without slowing delivery | Move long-running predictable services off variable billing. Keep burst and experimentation public |

Hybrid is harder to run than a single model. For the right workload mix, it is still the most financially rational option. The deciding factor is not whether both clouds are available. It is whether the team can place steady demand, burst demand, and idle recovery capacity where each one costs the least to operate.

A Decision Checklist for SMBs and DevOps

If you’re an SMB, a lean platform team, or a CTO trying to stop cloud spend from drifting upward, the best decision framework is brutally practical. Don’t ask which model is fashionable. Ask which model your workloads, team, and budget can operate well.

Start with workload behavior

A workload’s shape tells you more than the vendor’s sales deck ever will.

Use these checks:

- Choose public cloud when the workload has uncertain demand, fast product iteration, short-lived environments, or clear benefit from managed services.

- Lean private when the workload is long-running, utilization is stable, and performance behavior matters more than rapid elasticity.

- Use hybrid when the workload has a predictable base but occasional bursts, or when data and application tiers have different security requirements.

If your team can’t clearly describe a workload’s daily and weekly usage pattern, pause the migration discussion. You’re still guessing.
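The checks above can be written down as a small triage function, which is a handy way to force the team to answer each question explicitly. The field names, the order of the rules, and the choice of public cloud as the fallback are assumptions of this sketch, and the output is a starting point for discussion, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    usage_pattern_known: bool           # can the team describe daily and weekly usage?
    predictable_base: bool = False
    occasional_bursts: bool = False
    mixed_security_tiers: bool = False  # data and app tiers have different requirements
    long_running: bool = False
    stable_utilization: bool = False


def suggest_placement(w: Workload) -> str:
    """Return a starting-point suggestion based on the checklist above."""
    if not w.usage_pattern_known:
        return "pause"  # still guessing: measure usage before deciding
    if (w.predictable_base and w.occasional_bursts) or w.mixed_security_tiers:
        return "hybrid"
    if w.long_running and w.stable_utilization:
        return "private"
    return "public"  # assumed fallback for uncertain or short-lived demand


# Hypothetical workloads for illustration.
estate = [
    Workload("billing-core", True, predictable_base=True, occasional_bursts=True),
    Workload("internal-wiki", True, long_running=True, stable_utilization=True),
    Workload("campaign-site", True),
    Workload("legacy-reporting", False),
]

for w in estate:
    print(f"{w.name}: {suggest_placement(w)}")
```

Keeping the "pause" outcome first is deliberate: a workload nobody can describe should not get a placement recommendation at all.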

Check where idle compute is hiding

This is the most ignored line item in many SMB cloud estates. Teams obsess over instance family selection, then leave environments running around the clock that nobody touches after business hours.

Look for:

- development servers left on overnight

- QA environments that run on weekends with no users

- internal tools treated like revenue-generating production systems

- old proof-of-concept instances that nobody officially owns

The technical fix is rarely hard. The governance fix is harder. Someone has to define schedules, exceptions, and who can override them safely.
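As a sketch of what "schedules, exceptions, and overrides" can look like once written down, the function below decides whether a non-production server should be running at a given moment. The `Schedule` type, the office-hours window, and the time-boxed override are hypothetical choices for illustration. Actually starting or stopping servers would go through your provider's tooling, which is not shown here.

```python
from dataclasses import dataclass
from datetime import datetime, time
from typing import Optional


@dataclass(frozen=True)
class Schedule:
    start: time
    stop: time
    weekdays: frozenset  # 0 = Monday ... 6 = Sunday


def should_run(now: datetime, schedule: Schedule,
               override_until: Optional[datetime] = None) -> bool:
    """True if the server should be on: inside the schedule or under an active override."""
    if override_until is not None and now < override_until:
        return True  # an approved, time-boxed exception keeps the server up
    in_day = now.weekday() in schedule.weekdays
    in_hours = schedule.start <= now.time() < schedule.stop
    return in_day and in_hours


# Assumed policy: weekdays, 08:00 to 18:00.
office_hours = Schedule(time(8, 0), time(18, 0), frozenset({0, 1, 2, 3, 4}))

monday_morning = datetime(2025, 1, 6, 10, 0)
monday_night = datetime(2025, 1, 6, 22, 0)
saturday = datetime(2025, 1, 4, 10, 0)

print(should_run(monday_morning, office_hours))
print(should_run(monday_night, office_hours))
print(should_run(saturday, office_hours, override_until=datetime(2025, 1, 4, 12, 0)))
```

Making the override expire on its own is the important design choice. It lets an engineer keep a server up for a weekend release without turning a one-off exception into permanent spend.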

Match the model to your budget reality

Public cloud is often easier to start and harder to forecast. Private cloud is harder to stand up and often easier to model once utilization settles down.

A useful budget lens is this:

| Budget question | If yes | Likely direction |
|---|---|---|
| Do you need to avoid upfront commitment? | Cash preservation matters more than long-term efficiency | Public cloud |
| Are bills volatile and hard to explain month to month? | Finance wants predictability | Private or hybrid |
| Are workloads stable enough to justify dedicated capacity? | Spend is recurring and steady | Private |
| Do you need flexibility while controlling a stable base load? | Mixed demand profile | Hybrid |

Be honest about team capability

Many cloud strategies go wrong because teams choose the environment they wish they could operate, not the one they can operate consistently.

Ask directly:

- Does the team understand IAM, networking, and cost controls in AWS or Azure well enough to avoid drift?

- Can the team run private infrastructure without heroics?

- Do you have clear ownership for patching, backups, monitoring, and capacity planning?

- Will developers self-serve responsibly, or does every convenience become permanent spend?

The cheapest architecture on paper becomes expensive fast if your operating model depends on tribal knowledge.

Security and compliance should narrow the field early

If legal, customer contracts, or internal governance require tighter control boundaries, don’t wait until the end of the evaluation to admit it. That requirement should shape placement from the start.

A simple rule helps here:

- Public-first if standard controls and strong cloud governance are enough.

- Private-first if the environment needs custom policy enforcement, tighter residency narratives, or stronger infrastructure isolation.

- Hybrid-first if only part of the system carries the strict requirement.

What usually works for SMBs

For most SMBs and mid-market teams, the best answer isn’t “move everything private” or “stay all in on hyperscalers forever.”

What usually works is:

- keep customer-facing bursty workloads in public cloud

- keep development flexible, but enforce strict uptime schedules

- evaluate steady internal or high-baseline systems for private placement

- use hybrid where data sensitivity and traffic patterns differ

- review spend by workload category, not just by account or subscription

What usually fails

These patterns tend to create regret:

- choosing public cloud because it’s easy, then never revisiting placement

- moving to private cloud without enough operational discipline

- calling an environment “hybrid” when it’s really two disconnected platforms

- leaving cost optimization to engineering goodwill instead of policy

- assuming production standards apply to every non-production VM forever

The final decision test

If you want a fast executive filter, use this:

Pick public cloud when speed, elasticity, and service breadth matter most, and you’re prepared to actively govern cost.

Pick private cloud when workloads are steady, controls need to be tighter, and your team can operate the platform responsibly.

Pick hybrid when neither extreme fits, and you’re willing to enforce one operating model across both sides.

The biggest mistake is thinking the decision ends after migration. It doesn’t. No matter which model you choose, active cost management is part of the architecture. Idle compute, weak ownership, and sloppy schedules will distort TCO in public, private, and hybrid environments alike.

That’s the part many teams learn too late. Cloud efficiency isn’t something you buy once. It’s something you operate.

If you want a simpler way to cut waste without handing out full cloud-console access, CLOUD TOGGLE helps teams automatically power off idle AWS and Azure servers on a schedule, apply role-based access, and keep cloud spend under control without building custom scripts.