Automating when your AWS instances start and stop is a straightforward way to slash your cloud bill. You can accomplish this with native tools like the AWS Instance Scheduler or a custom setup using EventBridge and Lambda. The basic idea is to create a schedule and tag your EC2 or RDS instances, telling them when to run and when to rest. This simple change ensures you're only paying for resources when you actually need them.

Why You Should Schedule Your AWS Instances

Leaving cloud resources running 24/7 is like leaving the lights on in an empty office building: it's a surefire way to waste money. This is especially true for non-production environments.

Think about your development or testing servers. Most of them sit completely idle every night and all weekend. Yet, you're still footing the bill for every single second they're online.

For a typical 9-to-5 work week, that server is likely doing absolutely nothing for over 120 hours. That's more than 70% of the week you're paying for with zero return. Scheduling flips this script, turning that waste directly into savings.
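The arithmetic behind that figure is easy to check. Here is a minimal sketch, assuming an 8-hour working day and a 5-day week; the function name `idle_share` is just an illustrative helper.

```python
HOURS_PER_WEEK = 7 * 24  # 168 hours in a week


def idle_share(active_hours_per_day: float, active_days_per_week: int) -> tuple[float, float]:
    """Return (idle hours per week, idle fraction of the week)."""
    active = active_hours_per_day * active_days_per_week
    idle = HOURS_PER_WEEK - active
    return idle, idle / HOURS_PER_WEEK


# Assumed schedule: 8 working hours a day, Monday through Friday
idle_hours, idle_fraction = idle_share(8, 5)
print(f"{idle_hours:.0f} idle hours, {idle_fraction:.1%} of the week")
```

With those assumptions the server is idle for 128 of 168 hours, or roughly 76% of the week.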

The Business Case for Automation

Automating your instance start and stop times isn't just a clever trick; it's a core pillar of good cloud financial management. It shifts your compute costs from a fixed, predictable expense to a variable one that truly reflects your team's activity. The impact on your bottom line can be massive.

By turning off resources when they are not in use, you align your cloud spending directly with your operational needs. This isn't just about saving money; it's about operating with greater efficiency and financial discipline.

For many teams, the official AWS Instance Scheduler solution is a great place to start. Businesses using it have reported slashing their compute costs by up to 65% in dev, test, and staging environments. That's a huge win.

Cost Savings from Scheduling a t3.large Instance

Let's look at a quick, real-world example. We'll take a single t3.large EC2 instance, a common choice for development work, and see what happens when we schedule it to run only during business hours instead of 24/7.

| Metric | Running 24/7 (730 hours/month) | Scheduled (172 hours/month) | Monthly Savings |
|---|---|---|---|
| Hourly On-Demand Rate (us-east-1) | $0.0832 | $0.0832 | |
| Total Monthly Cost | $60.74 | $14.31 | $46.43 |
| Cost Reduction | | | 76.4% |

As you can see, even for just one instance, the savings are substantial. Scheduling this single server saves over $46 every month. Now, imagine scaling that across dozens or even hundreds of non-production instances. The numbers add up incredibly fast.
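To see how the table's numbers fall out, and how they scale across a fleet, here is a small sketch. It reuses the hourly rate and hour counts quoted above; the fleet size of 50 is purely an assumed example, and `fleet_savings` is a hypothetical helper.

```python
HOURLY_RATE_USD = 0.0832  # t3.large On-Demand rate quoted in the table


def monthly_cost(hours: float, rate: float = HOURLY_RATE_USD) -> float:
    """Cost of one instance for the given number of running hours."""
    return round(hours * rate, 2)


always_on = monthly_cost(730)   # running 24/7
scheduled = monthly_cost(172)   # business hours only
savings = round(always_on - scheduled, 2)
reduction = savings / always_on


def fleet_savings(instance_count: int) -> float:
    """Monthly savings if every instance in the fleet follows the same schedule."""
    return round(savings * instance_count, 2)


print(f"Per instance: ${savings} saved ({reduction:.1%})")
print(f"Assumed fleet of 50: ${fleet_savings(50)} saved per month")
```

Real fleets mix instance types and schedules, so treat this as a back-of-the-envelope estimate rather than a forecast.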

Beyond Cost Reduction

While saving money is the main event, scheduling brings other benefits to the table. It cultivates a more disciplined mindset around resource management.

It can also subtly improve your security posture by reducing the attack surface of non-essential instances during off-hours. Fewer running machines mean fewer potential entry points.

The core idea is simple: stop paying for idle capacity. As you can see when you explore the hidden cost of idle VMs, there's a ton of potential savings just waiting to be unlocked. Implementing an AWS instance scheduling plan is one of the highest-impact, lowest-effort optimizations you can make in your cloud environment.

If you're comfortable getting your hands dirty and want fine-grained control over your instance schedules, building a custom scheduler with Amazon EventBridge and AWS Lambda is a fantastic serverless approach. This method gives you total authority over the automation logic without the baggage of deploying a pre-packaged solution.

Essentially, you're building your own custom tool that fits your environment perfectly.

In this setup, EventBridge is the timekeeper. It's a serverless event bus that can kick off actions based on a schedule, much like a classic cron job. You just tell it when to run. Lambda provides the muscle, containing the code that does the actual work of starting or stopping your EC2 instances.

Crafting the Lambda Function

The heart of this operation is your Lambda function. The goal here is simple: write a script that looks for EC2 instances with a specific tag and then performs an action, either start_instances or stop_instances. Using tags is the secret sauce because it lets you dynamically control which machines are included in the schedule without ever having to edit your code.

For instance, you could decide on a tag key like Auto-Schedule with a value of true. Your function will then hunt for all instances carrying that exact tag.

Here's a straightforward Python snippet using the Boto3 library that stops any tagged instances. You can easily flip this to start instances just by changing the API call.

    import boto3

    def stop_tagged_instances(event, context):
        ec2 = boto3.client('ec2')

        # Define the tag to look for, and match only running instances
        filters = [
            {'Name': 'tag:Auto-Schedule', 'Values': ['true']},
            {'Name': 'instance-state-name', 'Values': ['running']},
        ]

        # Find instances with the specified tag that are currently running.
        # A paginator is used so large fleets are not cut off at one page.
        instance_ids_to_stop = []
        paginator = ec2.get_paginator('describe_instances')
        for page in paginator.paginate(Filters=filters):
            for reservation in page['Reservations']:
                for instance in reservation['Instances']:
                    instance_ids_to_stop.append(instance['InstanceId'])

        if instance_ids_to_stop:
            ec2.stop_instances(InstanceIds=instance_ids_to_stop)
            print(f"Stopped instances: {', '.join(instance_ids_to_stop)}")
        else:
            print("No running instances with the 'Auto-Schedule' tag found.")
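The "start" counterpart mentioned above might look like the sketch below. It assumes the same Auto-Schedule tag; the optional `ec2` parameter is an addition of this example (not something Lambda requires) so the function can be exercised with a stub client.

```python
def start_tagged_instances(event, context, ec2=None):
    """Start every stopped instance tagged Auto-Schedule=true."""
    if ec2 is None:
        # Imported lazily so the function can be tested without AWS access
        import boto3
        ec2 = boto3.client("ec2")

    # Same tag as the stop function, but match stopped instances
    filters = [
        {"Name": "tag:Auto-Schedule", "Values": ["true"]},
        {"Name": "instance-state-name", "Values": ["stopped"]},
    ]

    instance_ids = []
    paginator = ec2.get_paginator("describe_instances")
    for page in paginator.paginate(Filters=filters):
        for reservation in page["Reservations"]:
            for instance in reservation["Instances"]:
                instance_ids.append(instance["InstanceId"])

    if instance_ids:
        ec2.start_instances(InstanceIds=instance_ids)
        print(f"Started instances: {', '.join(instance_ids)}")
    else:
        print("No stopped instances with the 'Auto-Schedule' tag found.")
    return instance_ids
```

The only real differences from the stop function are the state filter (stopped instead of running) and the call to start_instances.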

Securing Your Function with an IAM Role

By design, your Lambda function can't just talk to other AWS services; it needs permission. This is where an IAM (Identity and Access Management) role comes into play. It's absolutely critical to follow the principle of least privilege, giving the function only the permissions it needs to do its job.

Never grant your Lambda function broad permissions like ec2:*. A tightly scoped policy drastically reduces your security risk by making sure the function can only perform its intended actions and nothing else.

An instance-scheduling function needs permissions to describe, start, and stop instances. It also needs basic CloudWatch Logs permissions so it can write logs for troubleshooting.

Here’s a ready-to-use JSON policy for the IAM role. It’s secure and provides just enough access for this specific task.

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": [
            "ec2:StartInstances",
            "ec2:StopInstances",
            "ec2:DescribeInstances"
          ],
          "Resource": "*"
        },
        {
          "Effect": "Allow",
          "Action": [
            "logs:CreateLogGroup",
            "logs:CreateLogStream",
            "logs:PutLogEvents"
          ],
          "Resource": "arn:aws:logs:*:*:*"
        }
      ]
    }
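If you want to push least privilege a step further, one option is to allow start and stop only on instances that carry the scheduling tag. The sketch below builds such a policy as a Python dictionary. It assumes the Auto-Schedule tag used earlier; `build_scheduler_policy` is a hypothetical helper, and the condition relies on the `aws:ResourceTag` condition key.

```python
import json


def build_scheduler_policy(tag_key: str = "Auto-Schedule", tag_value: str = "true") -> dict:
    """Build an IAM policy document scoped to instances with the scheduling tag."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Describe calls do not support resource-level permissions
                "Effect": "Allow",
                "Action": "ec2:DescribeInstances",
                "Resource": "*",
            },
            {
                # Start/stop only instances that carry the scheduling tag
                "Effect": "Allow",
                "Action": ["ec2:StartInstances", "ec2:StopInstances"],
                "Resource": "arn:aws:ec2:*:*:instance/*",
                "Condition": {
                    "StringEquals": {f"aws:ResourceTag/{tag_key}": tag_value}
                },
            },
            {
                # Allow the function to write its own logs
                "Effect": "Allow",
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": "arn:aws:logs:*:*:*",
            },
        ],
    }


print(json.dumps(build_scheduler_policy(), indent=2))
```

With this variant, a bug in the function cannot stop an untagged instance, because IAM rejects the call.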

Setting the Schedule with EventBridge

With your Lambda function and IAM role good to go, the final piece is telling EventBridge when to fire it off. The best way to do this is with two separate rules: one for stopping instances and another for starting them.

- Stop Instances Rule: Create a rule that runs on a schedule. A cron expression like cron(0 18 ? * MON-FRI *) would trigger your "stop" function every weekday at 6 PM UTC.

- Start Instances Rule: Create a second rule with a schedule like cron(0 8 ? * MON-FRI *) to trigger your "start" function every weekday morning at 8 AM UTC.

Each rule gets configured to target your specific Lambda function. Once you enable them, EventBridge will automatically invoke your code at the specified times. You've now got a completely serverless and highly cost-effective way to schedule AWS instance operations.
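If you prefer to create the rules from code rather than the console, the steps can be sketched with Boto3 as follows. The rule names and function ARNs in the usage comment are placeholders, and `create_schedule_rule` is a hypothetical helper; the clients are passed in so the logic can be tested without AWS access.

```python
def create_schedule_rule(events, lambda_client, rule_name, cron_expression, function_arn):
    """Create an EventBridge schedule rule that invokes a Lambda function."""
    # 1. Create (or update) the scheduled rule
    rule = events.put_rule(
        Name=rule_name,
        ScheduleExpression=cron_expression,
        State="ENABLED",
    )

    # 2. Allow EventBridge to invoke the function for this rule.
    #    Note: this call fails if the StatementId already exists on the function.
    lambda_client.add_permission(
        FunctionName=function_arn,
        StatementId=f"{rule_name}-invoke",
        Action="lambda:InvokeFunction",
        Principal="events.amazonaws.com",
        SourceArn=rule["RuleArn"],
    )

    # 3. Point the rule at the function
    events.put_targets(Rule=rule_name, Targets=[{"Id": "1", "Arn": function_arn}])
    return rule["RuleArn"]


# Example usage (placeholder names and ARNs):
#   import boto3
#   events, lam = boto3.client("events"), boto3.client("lambda")
#   create_schedule_rule(events, lam, "stop-dev-instances",
#                        "cron(0 18 ? * MON-FRI *)", "<stop function ARN>")
#   create_schedule_rule(events, lam, "start-dev-instances",
#                        "cron(0 8 ? * MON-FRI *)", "<start function ARN>")
```

The permission step is easy to forget when scripting this: without it the rule fires but the invocation is denied.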

When you need something more heavy-duty than a simple script for your AWS instance scheduling tasks, especially across larger environments, the official AWS Instance Scheduler solution is the way to go. This isn't a manual build; it's a pre-packaged, "batteries-included" solution you deploy straight from the AWS Solutions Library using a CloudFormation template.

The template does all the hard work for you. It automatically spins up a DynamoDB table to hold your schedules, a Lambda function with all the scheduling logic, and the CloudWatch triggers needed to run that function automatically. It's a seriously powerful setup right out of the box.

Deploying the CloudFormation Stack

Getting started is as simple as launching the solution's CloudFormation stack. You'll find it in the AWS Solutions Library, and the deployment is a pretty straightforward wizard. You'll just need to plug in a few key details during the setup process.

- Stack Name: Give your deployment a memorable name, like Dev-Instance-Scheduler, so you can easily spot it later.

- Default Timezone: You'll need to set a primary timezone for your schedules, like UTC or America/Los_Angeles, to make sure everything kicks off at the right local time.

- Services to Schedule: This is a nice touch. You can choose to schedule just EC2 instances, just RDS instances, or both. It's pretty versatile.

Once you’ve locked in the settings, CloudFormation takes the wheel and builds out all the resources. Just sit back and wait for the status to flip to CREATE_COMPLETE.

Defining and Applying Schedules with Tags

Here's where the real magic of the AWS Instance Scheduler solution comes in: it's all driven by resource tags. Instead of hardcoding instance IDs into a script, you define schedules in one central place and then "assign" them to your instances with a simple tag. It's a much cleaner way to manage things.

First, you'll define your schedules inside the DynamoDB table that the stack created for you. You might create a schedule called office-hours-pst, for example. This entry would tell AWS that instances should run from 9 AM to 5 PM, Monday through Friday, in the PST timezone.

Then, you just apply that schedule to any EC2 or RDS instance you want. All you have to do is add a tag to the resource with a specific key (it defaults to Schedule) and the name of your schedule as the value.

Example Tag:

- Key: Schedule

- Value: office-hours-pst
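Applying that tag to many instances by hand gets tedious, so here is a minimal sketch that does it through the EC2 API. `assign_schedule` is a hypothetical helper and the instance IDs in the usage comment are placeholders; `create_tags` is the Boto3 EC2 client call it wraps.

```python
def assign_schedule(ec2, instance_ids, schedule_name, tag_key="Schedule"):
    """Attach the schedule tag to each of the given EC2 instances."""
    ec2.create_tags(
        Resources=list(instance_ids),
        Tags=[{"Key": tag_key, "Value": schedule_name}],
    )


# Example usage (placeholder instance IDs):
#   import boto3
#   assign_schedule(boto3.client("ec2"),
#                   ["i-0123456789abcdef0", "i-0fedcba9876543210"],
#                   "office-hours-pst")
```

If you changed the tag key when deploying the stack, pass the same key here via `tag_key`.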

The Lambda function runs on a timer (every five minutes by default). It scans your account for any instances with that Schedule tag, looks up the details for office-hours-pst in DynamoDB, and then starts or stops the instance if it's time. This tag-based architecture is perfect for managing schedules across dozens or even hundreds of instances without losing your mind.
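To make that decision step concrete, here is a rough illustration of the kind of check involved. This is not the solution's actual code: the `Schedule` class and both functions are invented for this example, and the fixed UTC-8 offset is a stand-in for a real time zone database entry such as America/Los_Angeles.

```python
from dataclasses import dataclass
from datetime import datetime, time, timedelta, timezone, tzinfo


@dataclass(frozen=True)
class Schedule:
    begin: time
    end: time
    weekdays: frozenset  # 0 = Monday ... 6 = Sunday, as in datetime.weekday()
    tz: tzinfo


def desired_state(schedule: Schedule, now_utc: datetime) -> str:
    """Return 'running' if the local time falls inside the schedule window."""
    local = now_utc.astimezone(schedule.tz)
    in_window = (
        local.weekday() in schedule.weekdays
        and schedule.begin <= local.time() < schedule.end
    )
    return "running" if in_window else "stopped"


def action_for(schedule: Schedule, now_utc: datetime, current_state: str):
    """Return 'start', 'stop', or None when the instance is already in the right state."""
    target = desired_state(schedule, now_utc)
    if target == current_state:
        return None
    return "start" if target == "running" else "stop"


# 9 AM to 5 PM, Monday through Friday, fixed UTC-8 offset (ignores daylight saving)
office_hours_pst = Schedule(
    begin=time(9), end=time(17),
    weekdays=frozenset(range(5)),
    tz=timezone(timedelta(hours=-8)),
)
```

Evaluating the window in the schedule's own time zone, rather than converting the window to UTC, is what keeps a "9 to 5" schedule meaningful for teams in different regions.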

For a deeper look into what this solution offers, check out our guide on the AWS Instance Scheduler.

This method also ties into operational reliability. Getting proactive communication from AWS about scheduled events, like instance stops or reboots, is absolutely critical for keeping your cloud infrastructure running smoothly. In fact, a survey of AWS customers found that 80% said these notifications helped them head off unplanned downtime and stick to their service level agreements. You can learn more about how AWS handles scheduled events directly from their documentation.

Choosing the Right Scheduling Method for You

So, how should you actually schedule AWS instances? You're basically looking at two different paths. On one side, you've got the lean, build-it-yourself solution using EventBridge and Lambda. On the other, you have the beefier, pre-packaged AWS Instance Scheduler solution.

Honestly, the right choice really boils down to your team's size, your technical comfort level, and what you need to get done.

If you're a startup or a small team managing just a handful of instances, the serverless EventBridge and Lambda combo often hits the sweet spot. It's quick to set up and gives you direct control over the code, making it a breeze to adjust as you grow.

But if you're a larger company trying to wrangle hundreds of instances across different AWS accounts and regions, the AWS Instance Scheduler solution brings the structure and scale you need. Its tag-based system and centralized setup are built from the ground up for those kinds of complex environments.

Comparing Key Factors

Let's break down the differences to make the choice clearer. Think about where your organization lands on these key points.

- Setup Complexity: The Lambda route is definitely faster for a simple schedule. The Instance Scheduler needs you to deploy a full CloudFormation stack. It’s more work upfront, but you end up with a complete scheduling system.

- Maintenance Effort: With a custom Lambda function, that code is yours to maintain, for better or worse. The Instance Scheduler is maintained by AWS as a packaged solution, so the scheduling logic and templates are not yours to write or debug, which cuts down on your long-term headaches. You still run the deployed stack in your own account.

- Scalability: A Lambda function can certainly scale, but the Instance Scheduler was purpose-built for massive, multi-account operations with tricky scheduling demands.

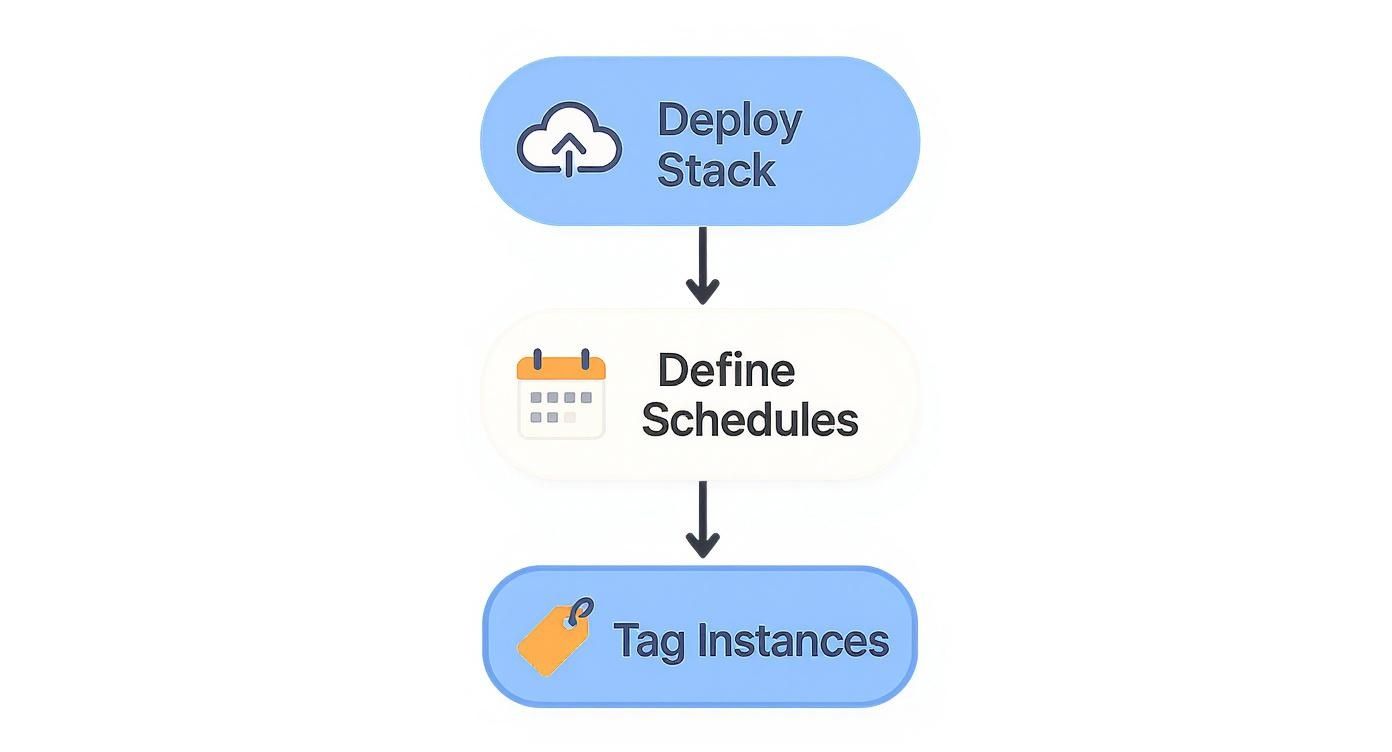

The process for the AWS Instance Scheduler is actually pretty straightforward once it's deployed, as this diagram shows.

As you can see, after the initial deployment, your day-to-day work is just defining schedules and tagging instances. That’s a management model that scales really well.

A Quick Comparison Table

To help you decide at a glance, here’s a side-by-side look at the two main approaches.

Comparison of AWS Instance Scheduling Methods

| Feature | EventBridge + Lambda | AWS Instance Scheduler Solution |
|---|---|---|
| Best For | Small teams, simple schedules, full code control. | Large enterprises, complex schedules, multi-account needs. |
| Setup | Quick and simple for basic start/stop functions. | More involved; requires deploying a CloudFormation stack. |
| Maintenance | You own and maintain the Lambda code. | Managed by AWS; less operational overhead for you. |
| Scalability | Good for single-account, but can get complex. | Built for large-scale, cross-account, and cross-region operations. |
| Flexibility | Highly flexible; you can code any logic you want. | Flexible through pre-defined schedules and tagging rules. |
| Cost | Very low; you only pay for Lambda invocations. | Low; you pay for the underlying resources (Lambda, DynamoDB). |

Ultimately, both are cost-effective, so the decision really comes down to your operational preferences and scale.

Making the Final Decision

At the end of the day, your choice depends entirely on your specific situation. If you love having granular control and your scheduling needs are straightforward, the DIY serverless method is a fantastic, low-cost way to start. It lets you build exactly what you need.

If your main goal is a standardized, scalable system that can be managed from a central point without writing a bunch of custom code, the AWS Instance Scheduler is the way to go. You trade a bit of upfront setup time for long-term operational sanity and enterprise-level features.

This should give you a solid framework for picking the right tool for the job. For a deeper dive, check out our article on whether you should use cloud-native instance scheduler tools, where we explore this topic in more detail. Each approach has its place; the trick is matching the tool's strengths to your organization's real-world challenges.

Scheduling Best Practices and Common Pitfalls

Setting up basic AWS instance scheduling is one thing, but making it reliable, secure, and truly effective is another. To avoid future headaches, you need to build some smart habits into your automation from day one.

The bedrock of any solid scheduling strategy is a consistent tagging policy. Whether you're using a custom Lambda function or the AWS Instance Scheduler, these tools rely entirely on tags to figure out which resources to start and stop. A messy, inconsistent tagging approach is a surefire way to miss instances and burn money.

Handle Stateful Applications Gracefully

One of the most common mistakes is to just yank the plug on instances running stateful applications. Think databases or in-memory caches. Abruptly stopping them is a recipe for data corruption or outright loss.

The fix? Use shutdown scripts to give your application a heads-up before the instance goes down.

Before the instance stops, a script can trigger a database dump, save session data to an S3 bucket, or flush a cache to disk. This ensures your application can resume exactly where it left off when it starts up again.

This simple step transforms a potentially destructive action into a safe, routine operation. It's absolutely critical for keeping data safe in non-production environments that need to act like the real thing.
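One way to wire this up, sketched below, is to run the pre-stop commands through Systems Manager Run Command and stop the instance only if they succeed. This assumes the instance runs the SSM Agent with a suitable instance profile; `graceful_stop` is a hypothetical helper and the script path in the usage comment is a placeholder.

```python
import time


def graceful_stop(ec2, ssm, instance_id, commands, poll_seconds=5, timeout_seconds=300):
    """Run pre-stop shell commands on the instance, then stop it if they succeed."""
    sent = ssm.send_command(
        InstanceIds=[instance_id],
        DocumentName="AWS-RunShellScript",
        Parameters={"commands": list(commands)},
    )
    command_id = sent["Command"]["CommandId"]

    # Poll until the command leaves its in-flight states or the timeout expires.
    # Sleeping before the first poll gives the invocation time to be registered.
    deadline = time.monotonic() + timeout_seconds
    status = "Pending"
    while time.monotonic() < deadline:
        time.sleep(poll_seconds)
        result = ssm.get_command_invocation(CommandId=command_id, InstanceId=instance_id)
        status = result["Status"]
        if status not in ("Pending", "InProgress", "Delayed"):
            break

    if status != "Success":
        # Leave the instance running rather than risk losing unsaved state
        raise RuntimeError(f"Pre-stop commands ended with status {status}; instance left running")

    ec2.stop_instances(InstanceIds=[instance_id])
    return status


# Example usage (placeholder instance ID and script path):
#   import boto3
#   graceful_stop(boto3.client("ec2"), boto3.client("ssm"),
#                 "i-0123456789abcdef0", ["/opt/app/pre-stop.sh"])
```

Failing closed, meaning the instance stays up when the pre-stop step fails, costs a little money but avoids the data loss this section warns about.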

Monitor and Secure Your Automation

You can't just "set it and forget it." You have to know when your automation fails. Setting up CloudWatch alarms is a non-negotiable step for building a reliable system. For example, you can easily create an alarm that pings you via SNS if your scheduling Lambda function throws an error.
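As a sketch of what such an alarm could look like, the snippet below builds the parameters for a CloudWatch alarm on the Lambda Errors metric and sends notifications to an SNS topic. The alarm name, the five-minute period, and the threshold of one error are assumptions to tune for your setup; both function names are hypothetical helpers.

```python
def error_alarm_params(function_name, sns_topic_arn):
    """Parameters for an alarm that fires when the function reports any error."""
    return {
        "AlarmName": f"{function_name}-errors",
        "Namespace": "AWS/Lambda",
        "MetricName": "Errors",
        "Dimensions": [{"Name": "FunctionName", "Value": function_name}],
        "Statistic": "Sum",
        "Period": 300,               # evaluate in 5-minute windows
        "EvaluationPeriods": 1,
        "Threshold": 1,              # one or more errors in a window
        "ComparisonOperator": "GreaterThanOrEqualToThreshold",
        "TreatMissingData": "notBreaching",  # no invocations is not a failure
        "AlarmActions": [sns_topic_arn],
    }


def create_error_alarm(cloudwatch, function_name, sns_topic_arn):
    """Create or update the alarm using a CloudWatch client."""
    cloudwatch.put_metric_alarm(**error_alarm_params(function_name, sns_topic_arn))


# Example usage (placeholder names):
#   import boto3
#   create_error_alarm(boto3.client("cloudwatch"),
#                      "stop-tagged-instances", "<SNS topic ARN>")
```

Because the function runs only a couple of times a day, treating missing data as not breaching keeps the alarm quiet between invocations.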

Keeping an eye on scheduled events for your EC2 instances is key to maintaining stable workloads. In a global survey of AWS customers, 70% said that using the EC2 console for this helped them slash unplanned downtime by 40%. You can read more about viewing scheduled events in the AWS documentation.

Finally, always stick to the principle of least privilege for the IAM roles your automation depends on. The role should only have permissions to start, stop, and describe instances, plus write logs. That's it. This dramatically shrinks your security risk if the credentials are ever compromised.

Keep an eye out for these other classic mistakes, too:

- Time Zone Mismanagement: For teams spread across the globe, hardcoding a single time zone invites chaos. Always use UTC for your schedules or pick a solution that explicitly supports multiple time zones.

- Ignoring Auto Scaling Groups: Stopping individual instances inside an Auto Scaling group doesn't work by default, because the group treats a stopped instance as unhealthy and replaces it. The right way to do it is by adjusting the group's desired capacity, not targeting the instances themselves.
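Adjusting desired capacity on a schedule can be sketched with Auto Scaling scheduled actions, as below. The action names and the 6 PM / 8 AM weekday times are assumptions that mirror the earlier cron examples, `schedule_group_hours` is a hypothetical helper, and the group's maximum size is assumed to be at least `working_capacity`.

```python
def schedule_group_hours(autoscaling, group_name, working_capacity, time_zone="UTC"):
    """Scale an Auto Scaling group to zero on weekday evenings and back each morning."""
    # Weekdays at 18:00: scale in to zero (MinSize must also drop to 0)
    autoscaling.put_scheduled_update_group_action(
        AutoScalingGroupName=group_name,
        ScheduledActionName="scale-in-evening",
        Recurrence="0 18 * * 1-5",   # standard five-field cron, not EventBridge syntax
        MinSize=0,
        DesiredCapacity=0,
        TimeZone=time_zone,
    )
    # Weekdays at 08:00: restore the working capacity
    autoscaling.put_scheduled_update_group_action(
        AutoScalingGroupName=group_name,
        ScheduledActionName="scale-out-morning",
        Recurrence="0 8 * * 1-5",
        MinSize=working_capacity,
        DesiredCapacity=working_capacity,
        TimeZone=time_zone,
    )


# Example usage (placeholder group name):
#   import boto3
#   schedule_group_hours(boto3.client("autoscaling"), "dev-web-asg", working_capacity=2)
```

Note that scaling in terminates the group's instances rather than stopping them, so anything that must survive overnight needs to live outside the instances.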

Frequently Asked Questions

When you start digging into scheduling AWS instances, a few common questions always seem to pop up. Let's walk through the most frequent ones I hear from teams implementing cloud automation.

Can I Also Schedule Amazon RDS Instances?

Yes, you absolutely can. The same scheduling logic applies just as well to Amazon RDS databases as it does to EC2 instances. You can use either the official AWS Instance Scheduler solution or your own custom EventBridge and Lambda functions to manage RDS start/stop times.

The main difference is in the specific API calls your code needs to make. Instead of start_instances and stop_instances, you'll be using the Boto3 calls start_db_instance and stop_db_instance (the StartDBInstance and StopDBInstance API actions). Your IAM role will also need a separate set of permissions for RDS actions, which are different from EC2 permissions. The good news? The AWS Instance Scheduler solution supports RDS right out of the box, which simplifies things quite a bit.
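For the custom route, an RDS version of the stop function might look like this sketch. It assumes the same Auto-Schedule tag is applied to your DB instances; `stop_tagged_db_instances` is a hypothetical helper, and the client is passed in so it can be tested with a stub.

```python
def stop_tagged_db_instances(rds, tag_key="Auto-Schedule", tag_value="true"):
    """Stop every available RDS DB instance that carries the scheduling tag."""
    stopped = []
    paginator = rds.get_paginator("describe_db_instances")
    for page in paginator.paginate():
        for db in page["DBInstances"]:
            # Tags are returned inline with each DB instance description
            tags = {t["Key"]: t["Value"] for t in db.get("TagList", [])}
            # Only instances in the 'available' state can be stopped
            if tags.get(tag_key) == tag_value and db["DBInstanceStatus"] == "available":
                rds.stop_db_instance(DBInstanceIdentifier=db["DBInstanceIdentifier"])
                stopped.append(db["DBInstanceIdentifier"])
    return stopped


# Example usage:
#   import boto3
#   stop_tagged_db_instances(boto3.client("rds"))
```

Keep in mind that RDS automatically restarts a stopped DB instance after seven days, so a database you want to keep off for longer needs a recurring stop rather than a one-time action.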

What Happens to My Data When an Instance Is Stopped?

This is a really important distinction to get right. When you stop an EC2 instance, any data stored on attached Elastic Block Store (EBS) volumes is completely safe. Think of it like shutting down your laptop: the hard drive is untouched. When you power it back on, all your files and applications on the EBS volume will be exactly as you left them.

The critical thing to remember, however, is that any data on instance store volumes is gone for good. These volumes are ephemeral, meaning they get wiped clean every single time the instance is stopped, hibernated, or terminated. For anything you need to keep, always use EBS.

How Do I Handle Jobs That Need to Run and Then Shut Down?

A fixed daily schedule doesn't work for everything. What about a batch processing job that might run for 20 minutes one day and three hours the next? You definitely don't want your scheduler pulling the plug mid-task.

For these kinds of task-based workloads, you're better off using a workflow orchestration service.

- AWS Step Functions: This lets you build a visual workflow that can kick off an instance, run your script, wait for it to signal completion, and then trigger the stop or terminate action. It's perfect for managing the entire sequence.

- Systems Manager Automation: You can use runbooks here to automate the whole process. It's a robust way to handle these task-oriented jobs without relying on a simple timer.
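Neither service is shown here, but the underlying run-then-stop sequence can be illustrated with a much simpler stand-in: a wrapper that runs the job and stops the instance afterwards, whether the job succeeds or fails. `run_then_stop` is a hypothetical helper, not a Step Functions or Systems Manager feature, and the instance ID in the usage comment is a placeholder.

```python
def run_then_stop(job, ec2, instance_id):
    """Run a batch job, then stop the instance even if the job raises."""
    try:
        return job()
    finally:
        # Runs on success and on failure, so the instance never idles after the job
        ec2.stop_instances(InstanceIds=[instance_id])


# Example usage (placeholder job and instance ID):
#   import boto3
#   run_then_stop(lambda: process_nightly_batch(),
#                 boto3.client("ec2"), "i-0123456789abcdef0")
```

The point is that the stop is tied to job completion rather than to the clock. An orchestration service adds retries, timeouts, and visibility on top of the same idea.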

Am I Charged for an EC2 Instance When It Is Stopped?

You are not charged for compute usage while an instance is in the stopped state. This is the entire reason scheduling is such a powerful cost-saving move: you're turning off the meter on the most expensive part of running the instance.

That said, you will continue to pay for any attached EBS volume storage. But since storage costs are usually just a small fraction of the compute costs, the overall savings from scheduling are still massive.
